
Try semantic search with the Amazon OpenSearch Service vector engine

Since the introduction of the k-NN plugin in 2020,

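OpenSearch Service supports a variety of search and relevance ranking techniques. Lexical search looks for the words of the query in the documents. Semantic search, supported by vector embeddings, embeds documents and queries into a high-dimensional semantic vector space, where texts with related meanings lie close to one another and are therefore semantically similar. As a result, it returns similar items even if they share no words with the query.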

On the public

Background

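A search engine is a special kind of database that lets you store documents and data and then run queries to retrieve the most relevant ones. End-user search queries usually consist of text entered in a search box. Two important techniques for using that text are lexical search and semantic search. In lexical search, the search engine compares the words in the search query to the words in the documents, matching word for word. Only items that contain all or most of the words the user typed match the query. In semantic search, the search engine uses a machine learning (ML) model to encode text from the source documents as dense vectors in a high-dimensional vector space; this is also called embedding the text into the vector space. It similarly encodes the query as a vector and then uses a distance metric to find nearby vectors in the multi-dimensional space. The algorithm for finding nearby vectors is called k-NN (k-nearest neighbors). Semantic search does not match individual query terms. Instead, it finds documents whose vector embeddings lie near the query's embedding in the vector space and are therefore semantically similar to the query, so the user can retrieve highly relevant items that contain none of the words in the query.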
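As a rough illustration of the k-NN step described above, the following minimal sketch ranks a few items by cosine similarity to a query vector. The three-dimensional vectors are hand-picked, hypothetical stand-ins for embeddings; a real system would obtain them from an ML model and would typically use an approximate nearest-neighbor index rather than the exhaustive scan shown here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def knn(query_vector, doc_vectors, k):
    """Return the ids of the k documents most similar to the query (exact scan)."""
    scored = [
        (cosine_similarity(query_vector, vector), doc_id)
        for doc_id, vector in doc_vectors.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical embeddings, chosen by hand for illustration only.
doc_vectors = {
    "tennis skirt": [0.9, 0.8, 0.1],
    "tennis racket": [0.9, 0.1, 0.1],
    "polo shirt": [0.7, 0.9, 0.0],
    "garden hose": [0.0, 0.1, 0.9],
}

# Pretend this is the embedding of the query "tennis clothes".
query_vector = [0.8, 0.9, 0.0]

nearest = knn(query_vector, doc_vectors, k=2)
print(nearest)
```

With these made-up vectors, "polo shirt" ranks among the nearest neighbors even though it shares no word with the query, which is the behavior the paragraph above attributes to semantic search.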

Text vector search

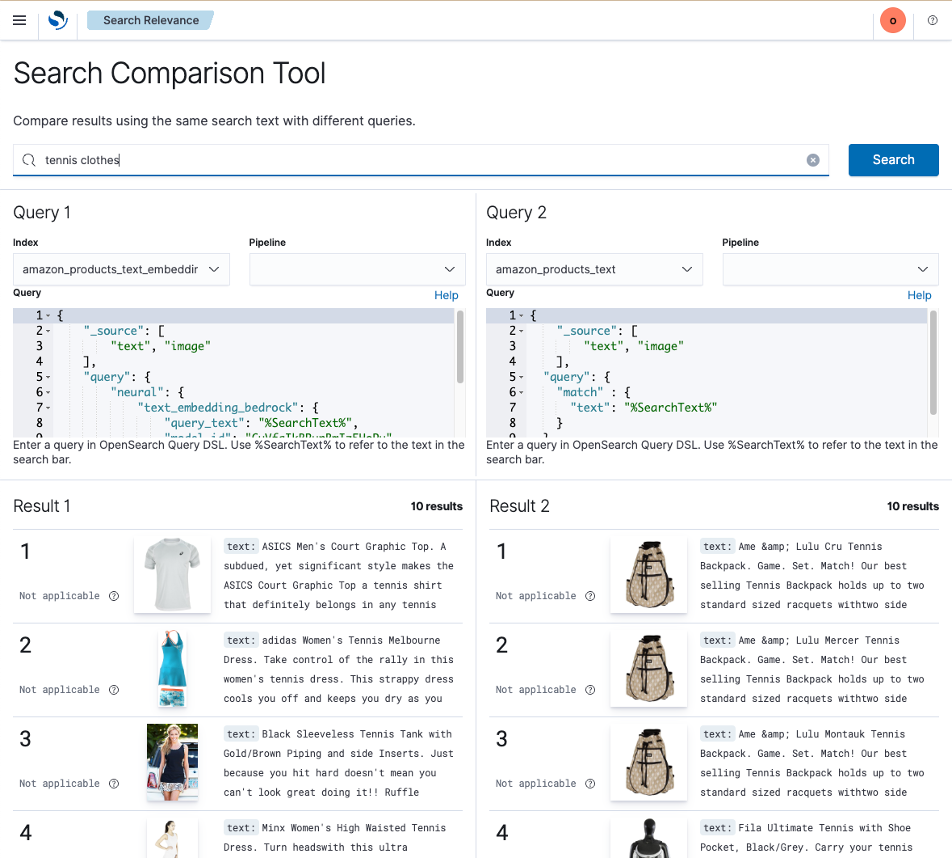

In the text box at the top, enter the query tennis clothes.

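On the left (Query 1), there is an OpenSearch DSL (domain-specific language for queries) semantic query that uses the amazon_products_text_embedding index, and on the right (Query 2), there is a simple lexical query that uses the amazon_products_text index.

You will see that lexical search doesn't know that clothes can be tops, shorts, dresses, and so on, but semantic search does.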
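The demo's actual query bodies are not reproduced here, so the following is only a sketch of what such a pair of queries might look like. It builds an OpenSearch `match` query (lexical) and a `neural` query (semantic, from the neural search plugin) as Python dictionaries. The field names `product_description` and `product_description_vector` and the model ID placeholder are assumptions made for illustration, not values taken from the demo.

```python
import json


def lexical_query(text, field="product_description", size=10):
    """Build a simple match query; the field name is a hypothetical example."""
    return {"size": size, "query": {"match": {field: text}}}


def semantic_query(text, model_id, vector_field="product_description_vector",
                   k=10, size=10):
    """Build a neural query; the vector field name is a hypothetical example.

    model_id must refer to a text embedding model deployed in your own cluster.
    """
    return {
        "size": size,
        "query": {
            "neural": {
                vector_field: {
                    "query_text": text,
                    "model_id": model_id,
                    "k": k,
                }
            }
        },
    }


# Placeholder model ID: replace it with the ID of a model you have deployed.
query_1 = semantic_query("tennis clothes", model_id="<your-model-id>")
query_2 = lexical_query("tennis clothes")

print(json.dumps(query_1, indent=2))
print(json.dumps(query_2, indent=2))
```

The printed JSON can be pasted into the two query panes of a search comparison page, after the field names and model ID are adjusted to match the indexes being queried.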

Comparing semantic and lexical results

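Similarly, in a search for warm-weather hat, the semantic results find many hats suitable for warm weather, whereas the lexical search returns results that mention "warm" and "hat", all of which are warm hats for cold weather rather than hats for warm weather. Likewise, if you are looking for a long-sleeved dress, you might search for long-sleeved dress. The lexical search ends up finding some short dresses with long sleeves, and even a child's dress shirt because the word "dress" appears in the description, whereas the semantic search finds much more relevant results: mostly long dresses with long sleeves, with a few errors.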
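The warm-weather hat behavior can be mimicked with a deliberately simplified lexical scorer that only counts shared words. This is a toy sketch: real lexical engines use analyzers and ranking functions such as BM25, and the product descriptions below are invented for illustration.

```python
def tokenize(text):
    """Lowercase and split on whitespace; a stand-in for a real analyzer."""
    return set(text.lower().split())


def lexical_score(query, document):
    """Count how many distinct query words also occur in the document."""
    return len(tokenize(query) & tokenize(document))


# Invented product descriptions, for illustration only.
documents = [
    "warm fleece hat for winter",
    "wide brim straw sun hat",
    "wool scarf",
]

query = "warm weather hat"
ranked = sorted(documents, key=lambda doc: lexical_score(query, doc), reverse=True)

for doc in ranked:
    print(lexical_score(query, doc), doc)
```

The winter hat scores highest because it contains both "warm" and "hat", while the sun hat, which is what the user actually wants, matches only one word.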

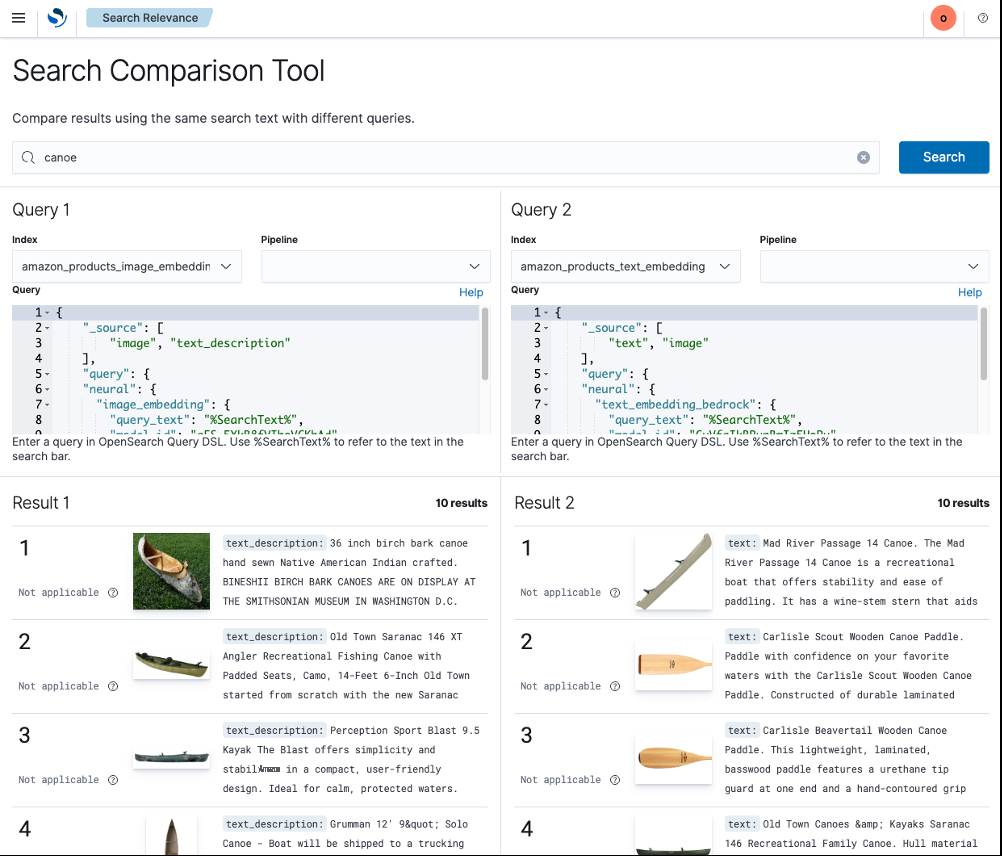

Cross-modal image search

Comparing image and text embeddings

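For example, sailing shoes does well with both approaches, but white sailing shoes does much better with visual similarity. The query canoe finds mostly canoes when visual similarity is used (which is probably what a user would expect), but a mixture of canoes and canoe accessories such as paddles when textual similarity is used.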

If you are interested in exploring multimodal models, contact your AWS specialist.

Building production-quality search experiences with semantic search

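These demos give you an idea of what vector-based semantic search can do compared with word-based lexical search, and of what you can achieve by using the vector engine for OpenSearch Serverless to build your search experience. Of course, production-quality search experiences use many more techniques to improve results. In particular,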

Conclusion

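OpenSearch Service includes a vector engine that supports semantic search as well as classic lexical search. The examples on the demo pages show the strengths and weaknesses of the different techniques. In OpenSearch 2.9 or later, you can use the search comparison tool on your own data.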

Further information

For more information about OpenSearch's semantic search capabilities, see the following:

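- Amazon OpenSearch Service's vector database capabilities explained
- Building a semantic search engine in OpenSearch
- The basics of semantic search in OpenSearch: architectures, benchmarks, and combination strategies
- Using OpenSearch as a vector database
- Partner highlight: setting up a hybrid system with OpenSearch that uses text and vector search
- Neural search documentation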

About the author

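Stavros Macrakis is a Senior Technical Product Manager on the OpenSearch project at Amazon Web Services. He is passionate about giving customers the tools to improve the quality of their search results.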

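*The generative AI-related Amazon Web Services services mentioned above are available only in AWS Regions outside China. Amazon Web Services China recommends these services solely to help you develop your overseas business and/or learn about cutting-edge industry technology.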