
Stream VPC flow logs to Datadog via Amazon Kinesis Data Firehose

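It is common to store the logs generated by customers' applications and services in a variety of tools. These logs matter for compliance, audits, troubleshooting, security incident response, meeting security policies, and many other purposes. Analyzing them helps you understand how users' applications behave and what patterns they follow, so that you can make informed decisions.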

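When you run workloads on Amazon Web Services (AWS), you need to analyze your VPC flow logs.

Datadog is a monitoring and security platform. It lets you easily explore and analyze logs, giving you deeper insight into the state of your applications and AWS infrastructure.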

In this post, you learn how to integrate VPC flow logs with Kinesis Data Firehose and deliver them to Datadog.

Solution overview

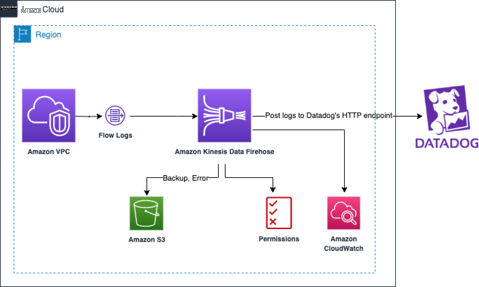

该解决方案使用了流向 Kinesis Data Firehose 的 VPC 流日志的本地集成。我们使用 Kinesis Data Firehose 传输流将流式传输的 VPC 流日志缓冲到您的 Datadog 账户中的 Datadog 目标端点。您可以将这些日志与 Datadog 日志管理和 Datadog Cloud SIEM 一起使用,分析云资源的运行状况、性能和安全性。

下图说明了解决方案架构。

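We walk you through the following high-level steps:

- Link your AWS account with your Datadog account.

- Create the Kinesis Data Firehose stream that the VPC service streams the flow logs to.

- Create the VPC flow logs subscription to Kinesis Data Firehose.

- Visualize the VPC flow logs in the Datadog dashboard.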

The account ID 123456781234 used in this post is a dummy account. It is for demonstration purposes only.

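Prerequisites

You should have the following prerequisites:

- An Amazon Simple Storage Service (Amazon S3) bucket to store the Firehose delivery stream backups and failed logs.

- A Datadog account. If you don't have one yet, visit the Datadog website to sign up for a 14-day free trial.

- The Datadog API key required to submit logs to Datadog.

- AWS Identity and Access Management (IAM) permissions to create and modify IAM roles and policies.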

Link your AWS account with your Datadog account for AWS integration

Follow the instructions provided on the Datadog website to set up the AWS integration.

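Create a Kinesis Data Firehose stream

Now that you have completed the Datadog integration with AWS, create the Kinesis Data Firehose delivery stream that the VPC flow logs are streamed to by completing the following steps: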

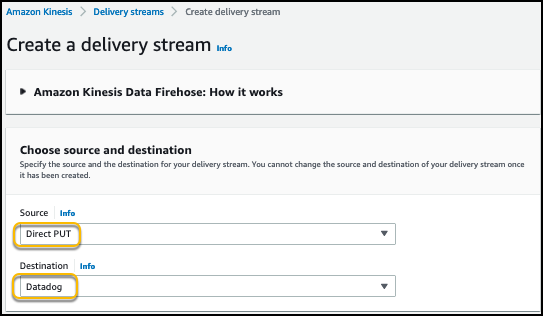

- On the Amazon Kinesis console, choose Kinesis Data Firehose in the navigation pane.

- Choose Create delivery stream.

- Choose Direct PUT as the source.

- Set Destination to Datadog.

-

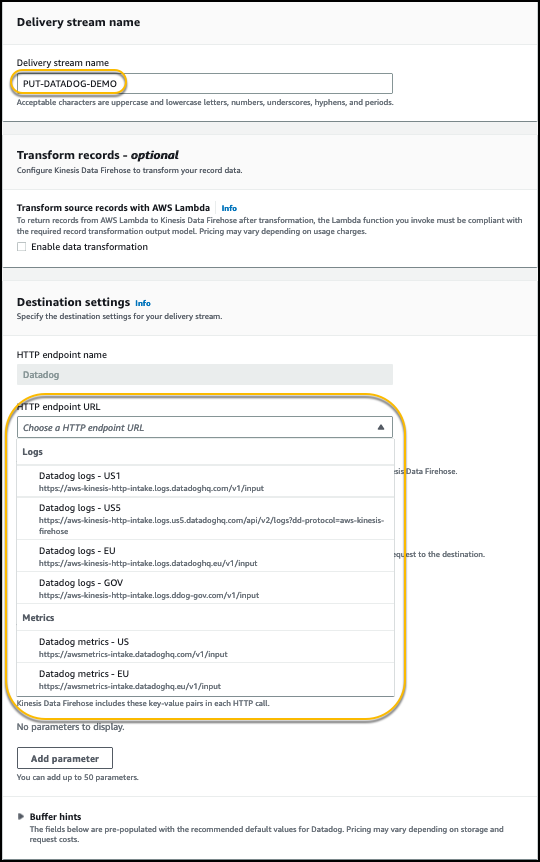

在

交付流名称中

,输入

PUT-DATADOG-DEMO。 - 将 “转换 记录” 下的 “ 数据转 换 ” 设置为 “ 禁用 ” 。

-

在

目标设置

中 ,对于

HTTP 端点 URL

,根据您的区域和 Datadog 帐户配置选择所需日志的 HTTP 终端节点。

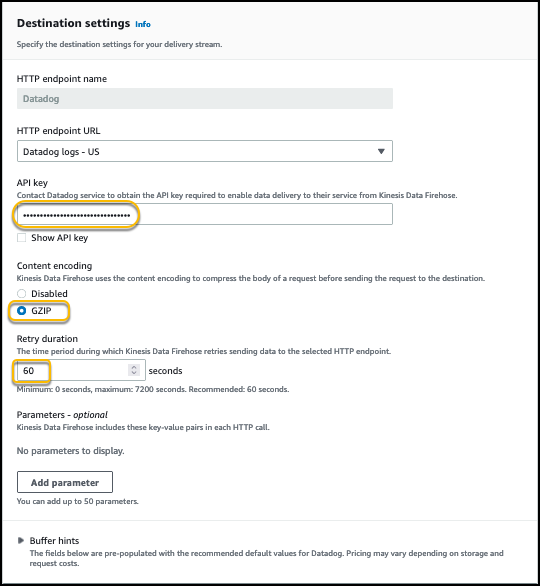

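- For API key, enter your Datadog API key. This allows your delivery stream to publish VPC flow logs to the Datadog endpoint. API keys are unique to your organization. The Datadog Agent requires an API key to submit data to Datadog.

- Set Content encoding to GZIP to reduce the size of the data transferred.

- Set Retry duration to 60. You can change the Retry duration value if needed, depending on the request handling capacity of the Datadog endpoint.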

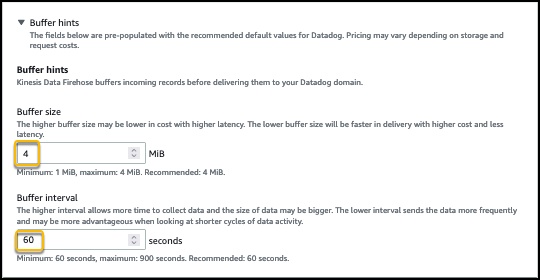

- Under Buffer hints, leave Buffer size and Buffer interval at the default values for the Datadog integration.

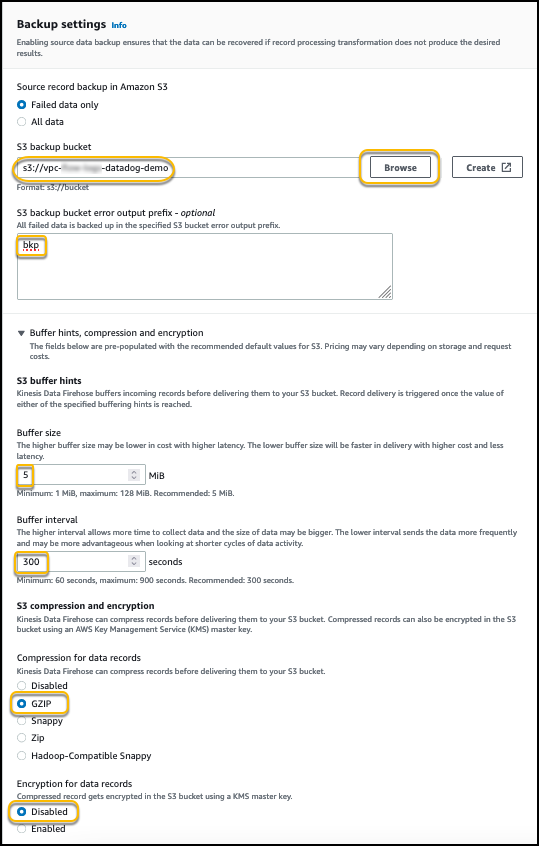

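- Under Backup settings, choose the S3 bucket you created as described in the prerequisites. It stores failed logs and backups under a specific prefix.

- Under the S3 buffer hints section, set Buffer size to 5 and Buffer interval to 300. You can change the S3 buffer size and interval based on your requirements.

- Under S3 compression and encryption, choose GZIP for Compression for data records, or another compression method of your choice. Compressing the data reduces the storage space required.

- Choose Disabled for Encryption for data records. You can enable encryption for data records to secure access to your logs.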

-

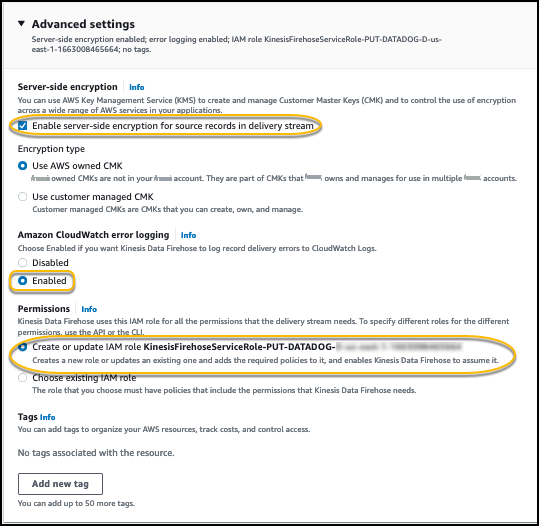

(可选)在 “

高级” 设置

中 ,选择 “

为传输流 中的源记录 启用服务器端加密

”。

您可以使用亚马逊云科技 托管密钥 或由您 管理的CMK 作为加密类型。

- 启用 云观测错误日志 。

-

选择

创建或更新 IAM 角色

,该角色由 Kinesis Data Firehose 作为此直播的一部分创建。

- 选择 “ 下一步 ” 。

- 查看您的设置。

- 选择 创建交付流 。
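The console steps above can also be expressed as a request to the Firehose CreateDeliveryStream API. The following is a minimal sketch, not the method used in this post: the helper names `build_datadog_stream_request` and `create_stream`, the backup prefix, and the choice of `FailedDataOnly` as the backup mode are assumptions made for illustration. The endpoint URL, API key, role ARN, and bucket ARN are supplied by the caller. Endpoint buffer hints, CloudWatch error logging, and server-side encryption are left out to keep the example short.

```python
from typing import Any, Dict


def build_datadog_stream_request(
    endpoint_url: str,
    api_key: str,
    role_arn: str,
    backup_bucket_arn: str,
    backup_prefix: str = "vpc-flow-logs/",   # hypothetical prefix
    backup_mode: str = "FailedDataOnly",     # assumption; "AllData" also backs up delivered data
) -> Dict[str, Any]:
    """Build keyword arguments for Firehose's create_delivery_stream call."""
    if backup_mode not in {"FailedDataOnly", "AllData"}:
        raise ValueError(f"unsupported backup mode: {backup_mode}")

    s3_backup = {
        "RoleARN": role_arn,
        "BucketARN": backup_bucket_arn,
        "Prefix": backup_prefix,
        # S3 buffer hints from the walkthrough: size 5 (MB), interval 300 (seconds).
        "BufferingHints": {"SizeInMBs": 5, "IntervalInSeconds": 300},
        "CompressionFormat": "GZIP",
        # Encryption for data records is set to Disabled in the walkthrough.
        "EncryptionConfiguration": {"NoEncryptionConfig": "NoEncryption"},
    }
    return {
        "DeliveryStreamName": "PUT-DATADOG-DEMO",
        "DeliveryStreamType": "DirectPut",
        "HttpEndpointDestinationConfiguration": {
            "EndpointConfiguration": {
                "Name": "Datadog",
                "Url": endpoint_url,
                "AccessKey": api_key,  # the Datadog API key
            },
            # GZIP content encoding reduces the size of the data transferred.
            "RequestConfiguration": {"ContentEncoding": "GZIP"},
            "RetryOptions": {"DurationInSeconds": 60},
            "RoleARN": role_arn,
            "S3BackupMode": backup_mode,
            "S3Configuration": s3_backup,
        },
    }


def create_stream(request: Dict[str, Any]) -> Dict[str, Any]:
    """Send the request with boto3 (third-party; needs AWS credentials)."""
    import boto3  # imported here so building the request works without boto3

    return boto3.client("firehose").create_delivery_stream(**request)


if __name__ == "__main__":
    # Placeholder values only; replace them with your own before calling create_stream.
    example = build_datadog_stream_request(
        endpoint_url="https://example.invalid/datadog-logs-endpoint",
        api_key="YOUR_DATADOG_API_KEY",
        role_arn="arn:aws:iam::123456781234:role/firehose-demo-role",
        backup_bucket_arn="arn:aws:s3:::firehose-demo-backup-bucket",
    )
    print(example["DeliveryStreamName"])
```

Unlike the console flow, this sketch expects an existing IAM role, because the API does not create one for you. Keeping the request builder separate from the API call makes it possible to review the settings before anything is created.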

创建 VPC 流日志订阅

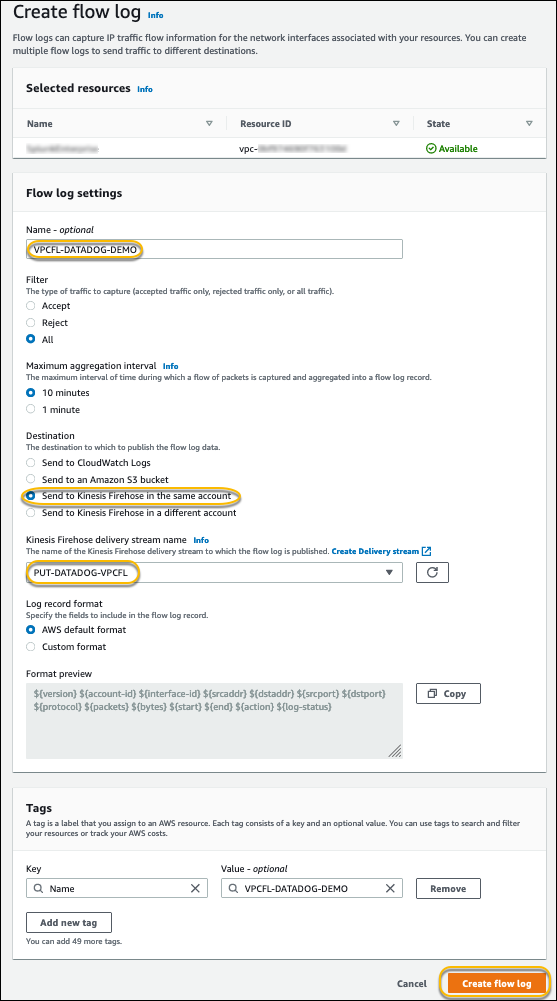

为您在上一步中创建的 Kinesis Data Firehose 传输流创建 VPC 流日志订阅:

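- On the Amazon VPC console, choose Your VPCs.

- Select the VPC that you want to create the flow log for.

- On the Actions menu, choose Create flow log.

- Select All to send all flow log records to the Firehose destination. If you want to filter the flow logs, you can select Accept or Reject instead.

- For Maximum aggregation interval, select 10 minutes, or select the minimum setting of 1 minute if you need the flow log data to be available for near-real-time analysis in Datadog.

- For Destination, select Send to Kinesis Data Firehose in the same account if the delivery stream is set up in the same account where you create the VPC flow logs. If you want to send the data to a different account, refer to the Amazon VPC flow logs documentation.

- For Log record format, select an option:

  - If you leave Log record format set to AWS default format, the flow logs are sent in the version 2 format.

  - Alternatively, you can specify custom fields for the flow logs to capture and send to Datadog.

  For more information about the log format and the available fields, refer to the Amazon VPC flow logs documentation.

- Choose Create flow log.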
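The same subscription can be described as a request to the Amazon EC2 CreateFlowLogs API. This is a sketch under stated assumptions rather than the procedure from this post: the helper names `build_flow_log_request` and `create_flow_log`, the VPC ID, and the Region in the example ARN are hypothetical, and the account ID is the dummy account used throughout. It covers only the same-account case.

```python
from typing import Any, Dict, Optional


def build_flow_log_request(
    vpc_id: str,
    delivery_stream_arn: str,
    traffic_type: str = "ALL",
    max_aggregation_seconds: int = 60,
    log_format: Optional[str] = None,
) -> Dict[str, Any]:
    """Build keyword arguments for EC2's create_flow_logs call."""
    # The console filter options All, Accept, and Reject map to these values.
    if traffic_type not in {"ALL", "ACCEPT", "REJECT"}:
        raise ValueError(f"unsupported traffic type: {traffic_type}")
    # The aggregation interval is either 1 minute (60) or 10 minutes (600).
    if max_aggregation_seconds not in {60, 600}:
        raise ValueError("max_aggregation_seconds must be 60 or 600")

    request: Dict[str, Any] = {
        "ResourceIds": [vpc_id],
        "ResourceType": "VPC",
        "TrafficType": traffic_type,
        "LogDestinationType": "kinesis-data-firehose",
        "LogDestination": delivery_stream_arn,
        "MaxAggregationInterval": max_aggregation_seconds,
    }
    # Omitting LogFormat keeps the AWS default (version 2) record format.
    if log_format is not None:
        request["LogFormat"] = log_format
    return request


def create_flow_log(request: Dict[str, Any]) -> Dict[str, Any]:
    """Send the request with boto3 (third-party; needs AWS credentials)."""
    import boto3  # imported here so building the request works without boto3

    return boto3.client("ec2").create_flow_logs(**request)


if __name__ == "__main__":
    example = build_flow_log_request(
        vpc_id="vpc-0123456789abcdef0",  # hypothetical VPC ID
        delivery_stream_arn=(
            "arn:aws:firehose:us-east-1:123456781234:"
            "deliverystream/PUT-DATADOG-DEMO"
        ),
    )
    print(example["LogDestinationType"])
```

Passing a custom `log_format` string corresponds to the custom fields option in the console.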

Now, let's explore the VPC flow logs in Datadog.

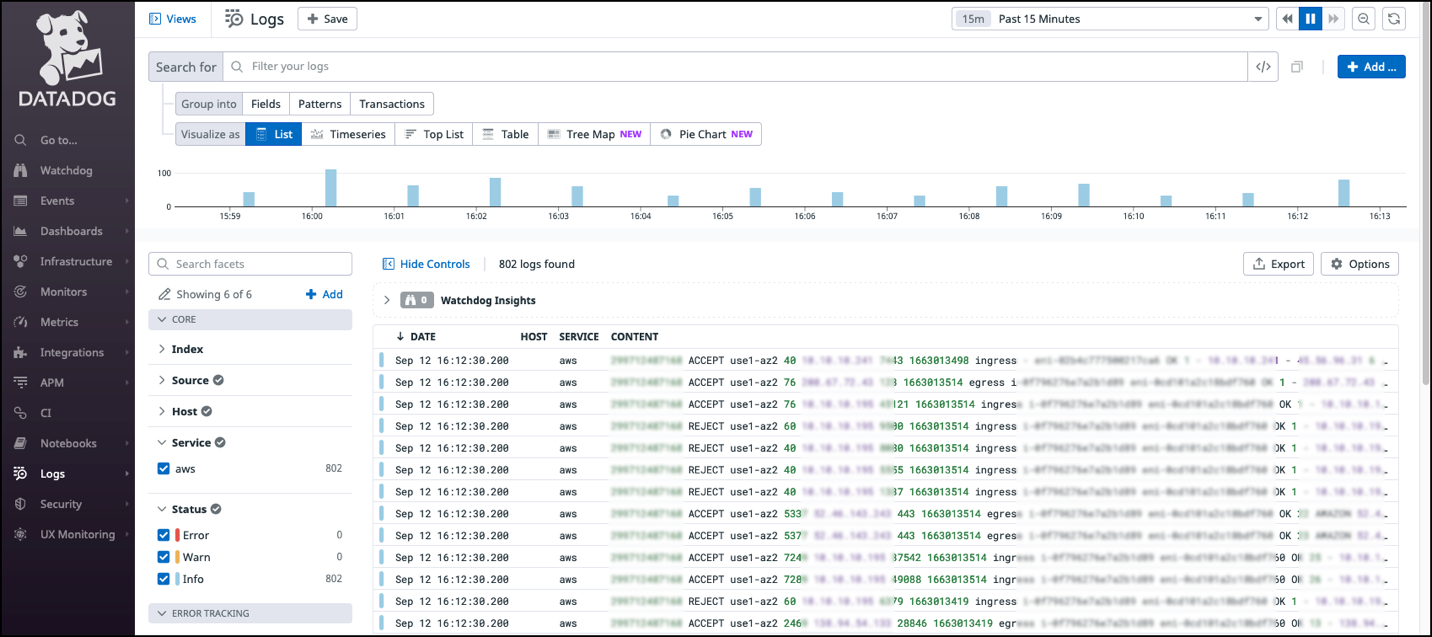

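Visualize VPC flow logs in the Datadog dashboard

In the Logs Search option in the navigation pane, filter to source:vpc. The VPC flow logs from your VPC appear in the Datadog Log Explorer and are parsed automatically, so you can analyze them by source, destination, action, or other attributes.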
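To make the parsed attributes more concrete, here is a small sketch that splits one record in the AWS default (version 2) format into named fields. It is not Datadog's parser, and the attribute names Datadog shows may differ. The function name `parse_flow_log_record` and the sample record are made up for illustration; the field order follows the default flow log record format.

```python
from typing import Dict

# Field order of the AWS default (version 2) flow log record format.
DEFAULT_V2_FIELDS = (
    "version", "account-id", "interface-id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log-status",
)


def parse_flow_log_record(line: str) -> Dict[str, str]:
    """Map a space-separated default-format record to its field names."""
    values = line.split()
    if len(values) != len(DEFAULT_V2_FIELDS):
        raise ValueError(
            f"expected {len(DEFAULT_V2_FIELDS)} fields, got {len(values)}"
        )
    return dict(zip(DEFAULT_V2_FIELDS, values))


if __name__ == "__main__":
    # Made-up sample record using the dummy account ID from this post.
    sample = (
        "2 123456781234 eni-0a1b2c3d4e5f67890 10.0.1.5 10.0.2.9 "
        "49152 443 6 10 840 1700000000 1700000060 ACCEPT OK"
    )
    record = parse_flow_log_record(sample)
    print(record["srcaddr"], record["dstaddr"], record["action"])
```

Records that use a custom format have a different field list, so this sketch applies only when the log record format is left at the AWS default.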

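Clean up

After you test this solution, delete all the resources you created to avoid incurring future charges:

- IAM roles

- IAM policies

- The VPC flow logs subscription

- The Kinesis Data Firehose delivery stream and its associated IAM role and policies

- The S3 bucket used for VPC flow logs backups and failed logs

- The VPC and its resources (if you created a new VPC and new resources in it)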
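Two of these deletions can be scripted. The sketch below assumes boto3-style clients are passed in; the function name `clean_up_streaming_resources` and the flow log ID in the usage comment are hypothetical. It removes the flow logs subscription before the delivery stream so that nothing is still writing to the stream when it is deleted. IAM roles and policies, the S3 bucket, and any VPC resources still need to be removed separately.

```python
from typing import Any, List


def clean_up_streaming_resources(
    ec2_client: Any,
    firehose_client: Any,
    flow_log_id: str,
    stream_name: str = "PUT-DATADOG-DEMO",
) -> List[str]:
    """Delete the flow logs subscription, then the delivery stream."""
    deleted: List[str] = []

    # Remove the subscription first so no new records reach the stream.
    ec2_client.delete_flow_logs(FlowLogIds=[flow_log_id])
    deleted.append(f"flow log {flow_log_id}")

    firehose_client.delete_delivery_stream(DeliveryStreamName=stream_name)
    deleted.append(f"delivery stream {stream_name}")
    return deleted


# Usage with real clients (third-party boto3; needs AWS credentials):
#   import boto3
#   clean_up_streaming_resources(
#       boto3.client("ec2"), boto3.client("firehose"), "fl-0123456789abcdef0"
#   )
```

The sketch does not inspect the API responses, so check the result of each call before treating a resource as removed.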

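Conclusion

In this post, we walked through a solution that integrates VPC flow logs with a Kinesis Data Firehose delivery stream, delivers them to a Datadog destination without any code, and visualizes them in a Datadog dashboard. With Datadog, you can easily explore and analyze logs to gain deeper insight into the state of your applications and AWS infrastructure.

Try this new, quick, and easy way of sending your VPC flow logs to a Datadog destination using Kinesis Data Firehose.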

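About the author

Chaitanya Shah is a Senior Technical Account Manager (TAM) at AWS, based in New York. He has over 22 years of experience working with enterprise customers. He loves to code and actively contributes to the AWS solutions labs to help customers solve complex problems. He guides AWS customers on best practices for their AWS Cloud migrations. He also specializes in AWS data transfer and in the data and analytics domain.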

