
Run multiple generative AI models on GPU using Amazon SageMaker multi-model endpoints with TorchServe and save up to 75% in inference costs

Multi-model endpoints (MMEs) are Am

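Generative AI applications have recently captured widespread attention and imagination. Customers want to deploy generative AI models on GPUs, but they are also conscious of cost. SageMaker MMEs support GPU instances and are a great option for this type of application. Today, we are excited to announce TorchServe support for SageMaker MMEs. This new model server support gives you all the benefits of MMEs while you keep using the serving stack that TorchServe customers know best. In this post, we demonstrate how to host generative AI models such as Stable Diffusion and Segment Anything Model on SageMaker MMEs using TorchServe. We also show how to build a language-guided editing solution that helps artists and content creators develop and iterate on their artwork faster.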

Solution overview

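Language-guided editing is a common generative AI use case across industries. It helps artists and content creators work more efficiently to meet content demand by automating repetitive tasks, optimizing campaigns, and providing a hyper-personalized experience for the end customer. Businesses can benefit from increased content output, cost savings, improved personalization, and an enhanced customer experience. In this post, we demonstrate how to use MME TorchServe to build language-assisted editing features that let you erase any unwanted object from an image, and modify or replace any object in an image by supplying a text instruction.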

The user experience flow for each use case is as follows:

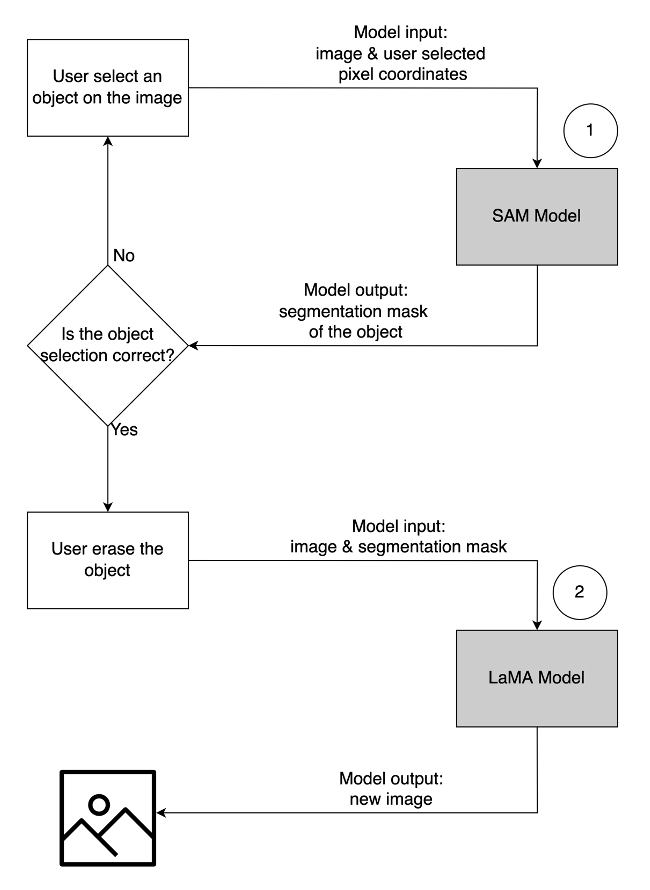

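- To remove an unwanted object, select the object in the image to highlight it. This action sends the pixel coordinates and the original image to a generative AI model, which generates a segmentation mask for the object. After confirming that the correct object is selected, you can send the original image and the mask image to a second model for removal. The detailed illustration of this user flow is shown below.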

| Step 1 | Step 2 | Step 3 |
| --- | --- | --- |
| Select an object ("dog") from the image | Confirm the correct object is highlighted | Erase the object from the image |

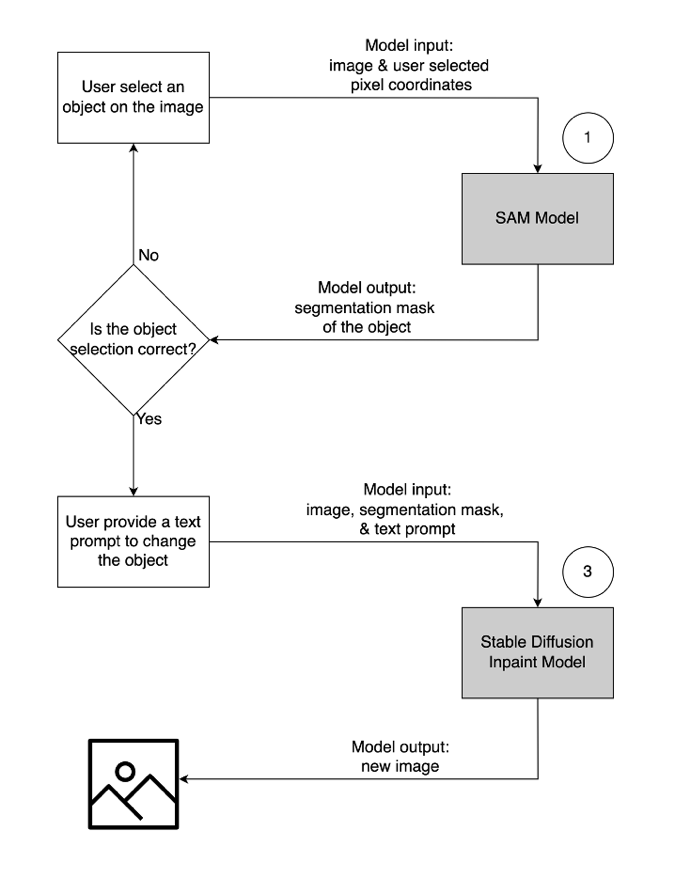

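- To modify or replace an object, select and highlight the desired object using the same process as above. After confirming that the correct object is selected, you can modify it by supplying the original image, the mask, and a text prompt. The model then changes the highlighted object according to the provided instructions. The detailed illustration of this second user flow is shown below.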

| Step 1 | Step 2 | Step 3 |
| --- | --- | --- |
| Select an object ("vase") from the image | Confirm the correct object is highlighted | Provide a text prompt ("futuristic vase") to modify the object |

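To power this solution, we use three generative AI models: Segment Anything Model (SAM), Large Mask Inpainting (LaMa), and Stable Diffusion Inpaint (SD). The following is how these models are used in the user experience workflow: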

| To remove an unwanted object | To modify or replace an object |
| --- | --- |

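- Segment Anything Model (SAM) is used to generate a segmentation mask of the object of interest. Developed by Meta Research, SAM is an open-source model that can segment any object in an image. It was trained on a massive dataset called SA-1B, which comprises over 11 million images and 1.1 billion segmentation masks. For more information about SAM, refer to their website and research paper.
- LaMa is used to remove any unwanted object from an image. LaMa is a generative adversarial network (GAN) model that specializes in filling in missing parts of images using irregular masks. Its architecture combines image-wide global context with a single-step architecture that uses Fourier convolutions, which lets it achieve state-of-the-art results at a faster speed. For more details about LaMa, visit their website and research paper.
- The SD 2 inpaint model from Stability AI is used to modify or replace objects in an image. It lets us edit the object in the masked area by providing a text prompt. The inpaint model is based on the text-to-image SD model, which can create high-quality images from a simple text prompt. It accepts additional arguments, such as the original image and the mask image, which allow quick modification and restoration of existing content. To learn more about Stable Diffusion models on AWS, refer to Create high-quality images with Stable Diffusion models and deploy them cost-efficiently with Amazon SageMaker.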

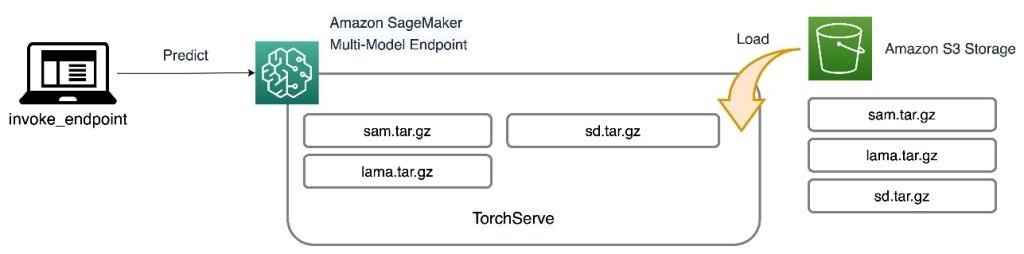

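All three models are hosted on SageMaker MMEs, which reduces the operational burden of managing multiple endpoints. In addition, because an MME shares resources across models, you no longer need to worry about individual models being underutilized. You benefit from higher instance saturation, which ultimately leads to cost savings. The following architecture diagram illustrates how all three models are served using SageMaker MMEs with TorchServe.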

We have run this example on a SageMaker notebook instance using the a_python3 (Python 3.10.10) kernel.

Extend the TorchServe container

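The first step is to prepare the model hosting container. SageMaker provides a managed PyTorch Deep Learning Container (DLC) that you can retrieve using the following code snippet: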
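The retrieval snippet did not survive in this copy. The following is a minimal sketch that assumes the `sagemaker` Python SDK is installed. `image_uris.retrieve()` is the SDK's lookup for managed DLC image URIs. The framework version (`2.0.0`), the Python tag (`py310`), and the default instance type below are assumptions, not values taken from the post. The SDK import sits inside the function, so the argument-building helper works without it.

```python
def dlc_lookup_args(region: str, instance_type: str = "ml.g5.2xlarge") -> dict:
    """Keyword arguments for sagemaker.image_uris.retrieve() (versions are assumptions)."""
    return {
        "framework": "pytorch",
        "region": region,
        "version": "2.0.0",              # assumed PyTorch version
        "py_version": "py310",           # assumed Python version tag
        "image_scope": "inference",      # serving image rather than training image
        "instance_type": instance_type,  # a GPU instance type selects the GPU variant
    }


def retrieve_pytorch_dlc(region: str, instance_type: str = "ml.g5.2xlarge") -> str:
    """Return the URI of the managed PyTorch inference DLC; needs the sagemaker SDK."""
    from sagemaker import image_uris  # lazy import: only required when actually called

    return image_uris.retrieve(**dlc_lookup_args(region, instance_type))
```

For example, `retrieve_pytorch_dlc("us-east-1")` returns an ECR image URI that can serve as the base image of the extended container.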

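Because the models require resources and additional packages that are not included in the base PyTorch DLC, you need to build a Docker image. This image is then uploaded to Amazon Elastic Container Registry (Amazon ECR).

Run the shell command file to build the custom image locally and push it to Amazon ECR: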
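The shell script itself is not reproduced here. The following sketch only assembles, as strings, the AWS CLI and Docker commands that such a build-and-push script typically runs. The account ID, repository name, and tag are hypothetical placeholders. If the Dockerfile's `FROM` line points at the AWS DLC registry, Docker must also be logged in to that registry before the build.

```python
def ecr_image_uri(account_id: str, region: str, repo: str, tag: str = "latest") -> str:
    """URI of an image in a private Amazon ECR repository."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repo}:{tag}"


def build_and_push_commands(account_id: str, region: str, repo: str, tag: str = "latest") -> list[str]:
    """Shell commands to build the extended image locally and push it to Amazon ECR."""
    registry = f"{account_id}.dkr.ecr.{region}.amazonaws.com"
    uri = ecr_image_uri(account_id, region, repo, tag)
    return [
        # Create the repository once; this returns an error if it already exists.
        f"aws ecr create-repository --repository-name {repo} --region {region}",
        # Log Docker in to your private registry.
        f"aws ecr get-login-password --region {region} | "
        f"docker login --username AWS --password-stdin {registry}",
        # Build from the Dockerfile in the current directory, then tag and push.
        f"docker build -t {repo}:{tag} .",
        f"docker tag {repo}:{tag} {uri}",
        f"docker push {uri}",
    ]
```

Keeping the URI in one helper ensures that the pushed tag and the `image_uri` later given to the endpoint stay identical.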

Prepare the model artifacts

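The main difference with the new TorchServe-enabled MMEs is how you prepare your model artifacts. The code repo provides a skeleton folder for each model (the models folder) to hold the files that TorchServe requires. We follow the same four-step process to prepare each model `.tar` file.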

The following code is an example of the skeleton folder for the SD model:
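The folder listing is missing here. The following minimal sketch assumes a layout containing the two files the post names (`custom_handler.py` and `model-config.yaml`) plus a hypothetical `checkpoint/` subfolder for the weights. It creates the skeleton in a temporary directory and lists it.

```python
import tempfile
from pathlib import Path

# Placeholder contents; the real files hold the handler code and TorchServe settings.
SKELETON_FILES = {
    "custom_handler.py": "# TorchServe custom handler for this model\n",
    "model-config.yaml": "# per-model TorchServe configuration\n",
}


def create_skeleton(root: Path, model_name: str) -> Path:
    """Create <root>/<model_name>/ with the handler, the config, and a checkpoint folder."""
    model_dir = root / model_name
    (model_dir / "checkpoint").mkdir(parents=True, exist_ok=True)  # hypothetical weights folder
    for file_name, content in SKELETON_FILES.items():
        (model_dir / file_name).write_text(content)
    return model_dir


def list_tree(model_dir: Path) -> list[str]:
    """Sorted POSIX paths of everything under model_dir, prefixed by its name."""
    return sorted(p.relative_to(model_dir.parent).as_posix() for p in model_dir.rglob("*"))


def demo() -> list[str]:
    # Build the skeleton in a throwaway directory and return its listing.
    with tempfile.TemporaryDirectory() as tmp:
        return list_tree(create_skeleton(Path(tmp), "sd"))
```

`demo()` returns `["sd/checkpoint", "sd/custom_handler.py", "sd/model-config.yaml"]`. The same function can create folders for `sam` and `lama`.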

The first step is to download the pretrained model checkpoints into the models folder:
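The download command is not shown. The following sketch assumes the SD inpainting weights come from the Hugging Face Hub repository `stabilityai/stable-diffusion-2-inpainting` and that `huggingface_hub` is installed. The post does not say which source or tool it uses, and the `checkpoint/` folder name is hypothetical.

```python
from pathlib import Path

# Assumption: SD inpainting weights are pulled from this Hugging Face Hub repository.
SD_REPO_ID = "stabilityai/stable-diffusion-2-inpainting"


def checkpoint_dir(model_dir: Path) -> Path:
    """Hypothetical folder inside the model skeleton that holds the downloaded weights."""
    return model_dir / "checkpoint"


def download_checkpoint(model_dir: Path, repo_id: str = SD_REPO_ID) -> Path:
    """Download a snapshot of `repo_id` into the model folder; needs huggingface_hub."""
    from huggingface_hub import snapshot_download  # lazy import keeps the helpers dependency-free

    target = checkpoint_dir(model_dir)
    target.mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target
```

The SAM and LaMa checkpoints would be fetched from their own sources into their respective model folders in the same way.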

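The next step is to define a `custom_handler.py` file. This file defines how the model behaves when it receives a request: loading the model, preprocessing the input, and postprocessing the output. The `handle` method is the main entry point for requests. It accepts a request object and returns a response object. It loads the pretrained model checkpoint and applies the `preprocess` and `postprocess` methods to the input and output data. The following code snippet illustrates a simple structure of the `custom_handler.py` file. For more details, refer to the TorchServe custom handler documentation.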
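The following is a dependency-free sketch of that structure. A real handler would typically subclass `BaseHandler` from `ts.torch_handler.base_handler` and load the actual model in `initialize()`. Here, a stand-in "model" that reverses the image bytes keeps the example runnable without TorchServe or a GPU. The JSON field name `image` and the `make_local_context()` helper are hypothetical.

```python
import base64
import json
from types import SimpleNamespace


class CustomHandler:
    """Skeleton mirroring a TorchServe custom handler (stand-in model, no ts import)."""

    def __init__(self):
        self.model = None
        self.model_dir = None
        self.initialized = False

    def initialize(self, context):
        # TorchServe exposes the unpacked model archive directory here.
        self.model_dir = context.system_properties.get("model_dir")
        # Placeholder for loading the pretrained checkpoint from self.model_dir.
        self.model = lambda image: image[::-1]
        self.initialized = True

    def preprocess(self, requests):
        # Each request in the batch carries its payload under "body" or "data".
        images = []
        for request in requests:
            payload = request.get("body") or request.get("data")
            if isinstance(payload, (bytes, bytearray, str)):
                payload = json.loads(payload)
            images.append(base64.b64decode(payload["image"]))
        return images

    def inference(self, images):
        return [self.model(image) for image in images]

    def postprocess(self, outputs):
        # Return exactly one response per request in the batch.
        return [{"image": base64.b64encode(out).decode("ascii")} for out in outputs]

    def handle(self, data, context):
        """Entry point: load on first use, then preprocess -> inference -> postprocess."""
        if not self.initialized:
            self.initialize(context)
        return self.postprocess(self.inference(self.preprocess(data)))


def make_local_context(model_dir: str) -> SimpleNamespace:
    """Minimal stand-in for TorchServe's context object, for local testing only."""
    return SimpleNamespace(system_properties={"model_dir": model_dir})
```

Keeping `preprocess`, `inference`, and `postprocess` separate means only `initialize()` and `inference()` need to change when the stand-in is replaced by SAM, LaMa, or SD.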

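The last file that TorchServe requires is `model-config.yaml`.

This file defines the model server configuration, such as the number of workers and the batch size. The configuration is set per model, and an example configuration file is shown in the following code. For the complete list of parameters, refer to the TorchServe documentation.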
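The following example configuration is sketched as a Python dict that is rendered to the flat `key: value` YAML that TorchServe reads. `minWorkers`, `maxWorkers`, `batchSize`, `maxBatchDelay` (milliseconds), and `responseTimeout` (seconds) are TorchServe model-config keys. The values are assumptions for a single large GPU model, not the post's settings.

```python
from pathlib import Path

# Per-model TorchServe settings; the values are assumptions.
MODEL_CONFIG = {
    "minWorkers": 1,         # workers started when the model is loaded
    "maxWorkers": 1,         # cap on workers for this model (one large GPU model)
    "batchSize": 1,          # requests aggregated into one inference call
    "maxBatchDelay": 200,    # milliseconds to wait while filling a batch
    "responseTimeout": 300,  # seconds before an inference request times out
}


def render_model_config(cfg: dict) -> str:
    """Render a flat dict as `key: value` YAML lines."""
    return "".join(f"{key}: {value}\n" for key, value in cfg.items())


def write_model_config(model_dir: Path, cfg: dict = MODEL_CONFIG) -> Path:
    """Write model-config.yaml into the model's skeleton folder."""
    path = model_dir / "model-config.yaml"
    path.write_text(render_model_config(cfg))
    return path
```

With one worker per model, each model holds a single copy of its weights in GPU memory. That matters when several models share one GPU behind the MME.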

The final step is to package all the model artifacts into a single `.tar.gz` file using the `torch-model-archiver` module:
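The following sketch assumes that the archiver runs with `--archive-format no-archive` and that the resulting folder is compressed in a separate step. The first function only builds the `torch-model-archiver` argument list. Its flags are real archiver options, but the paths and the `--extra-files` value are hypothetical. The second function uses Python's `tarfile` to produce the `.tar.gz` with the model folder as the top-level entry, similar to `tar czvf sd.tar.gz sd/`.

```python
import tarfile
import tempfile
from pathlib import Path


def archiver_args(model_name: str, model_dir: str, export_path: str) -> list[str]:
    """torch-model-archiver argv; the --extra-files value and the paths are hypothetical."""
    return [
        "torch-model-archiver",
        "--model-name", model_name,
        "--version", "1.0",
        "--handler", f"{model_dir}/custom_handler.py",
        "--config-file", f"{model_dir}/model-config.yaml",
        "--extra-files", f"{model_dir}/checkpoint",
        "--export-path", export_path,
        "--archive-format", "no-archive",  # unpacked output instead of a .mar file
    ]


def make_tar_gz(folder: Path, output: Path) -> list[str]:
    """Compress `folder` (kept as the top-level entry) into `output` and list its members."""
    with tarfile.open(str(output), "w:gz") as tar:
        tar.add(str(folder), arcname=folder.name)
    with tarfile.open(str(output), "r:gz") as tar:
        return sorted(tar.getnames())


def demo() -> list[str]:
    # Package a tiny stand-in model folder in a throwaway directory.
    with tempfile.TemporaryDirectory() as tmp:
        folder = Path(tmp) / "sd"
        folder.mkdir()
        (folder / "model-config.yaml").write_text("minWorkers: 1\n")
        return make_tar_gz(folder, Path(tmp) / "sd.tar.gz")
```

The archive's file name (for example, `sd.tar.gz`) is what later identifies the model on the MME.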

Create the multi-model endpoint

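The steps to create a SageMaker MME are the same as before. In this example, you spin up an endpoint using the SageMaker SDK. Start by defining the model location and the hosting container.

Then you define a `MultiDataModel` that captures all the attributes, such as the model location, the hosting container, and access permissions:

The `deploy()` function creates an endpoint configuration and hosts the endpoint: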
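The code for this step is not included here. The following sketch assumes the `sagemaker` SDK, an existing IAM role ARN, and the URI of the extended container in Amazon ECR. `MultiDataModel` takes the S3 prefix that holds every model archive, and `deploy()` creates the endpoint configuration and the endpoint. The endpoint name pattern, `predictor_cls=Predictor`, and the JSON serializer choice are assumptions.

```python
from datetime import datetime
from typing import Optional


def mme_settings(bucket: str, prefix: str, now: Optional[datetime] = None) -> dict:
    """Model name plus the S3 prefix that holds every model archive."""
    now = now or datetime.now()
    return {
        "name": "torchserve-mme-genai-" + now.strftime("%Y-%m-%d-%H-%M-%S"),
        "model_data_prefix": f"s3://{bucket}/{prefix.strip('/')}/",
    }


def deploy_mme(bucket: str, prefix: str, image_uri: str, role: str,
               instance_type: str = "ml.g5.2xlarge"):
    """Create a MultiDataModel and deploy it; requires the sagemaker SDK."""
    from sagemaker.deserializers import JSONDeserializer
    from sagemaker.multidatamodel import MultiDataModel
    from sagemaker.predictor import Predictor
    from sagemaker.serializers import JSONSerializer

    mme = MultiDataModel(
        image_uri=image_uri,      # the extended TorchServe image in Amazon ECR
        role=role,                # IAM role that SageMaker assumes (S3 and ECR access)
        predictor_cls=Predictor,  # so deploy() returns a predictor object
        **mme_settings(bucket, prefix),
    )
    # deploy() creates the endpoint configuration, then the endpoint.
    return mme.deploy(
        initial_instance_count=1,
        instance_type=instance_type,
        serializer=JSONSerializer(),
        deserializer=JSONDeserializer(),
    )
```

Because every model lives under one S3 prefix, adding a model later means uploading another archive there. The endpoint itself does not need to be redeployed.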

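In the example we provided, we also show how to list models and dynamically add new models using the SDK. The `add_model()` function copies your local model `.tar` files to the MME S3 location: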
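The following sketch of adding and listing models assumes that `mme` is a `sagemaker.multidatamodel.MultiDataModel` instance, such as the one used to deploy the endpoint. `add_model()` uploads a local file under `model_data_prefix` (at `model_data_path` when given), and `list_models()` yields the paths under that prefix. Using the bare file name as `model_data_path` is a choice made here so that it matches the `target_model` value used at invocation time.

```python
from pathlib import Path


def target_model_name(local_tar: str) -> str:
    """The MME addresses each model by its path relative to model_data_prefix."""
    return Path(local_tar).name


def add_models(mme, local_tars) -> list:
    """Upload local archives under the MME prefix and return the models now listed."""
    for tar_path in local_tars:
        # add_model() copies the file to model_data_prefix + model_data_path.
        mme.add_model(model_data_source=tar_path, model_data_path=target_model_name(tar_path))
    return sorted(mme.list_models())
```

For example, `add_models(mme, ["sam.tar.gz", "lama.tar.gz", "sd.tar.gz"])` would make all three archives invocable by those names.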

Invoke the models

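Now that all three models are hosted on the MME, we can invoke each model in sequence to build our language-assisted editing features. To invoke a model, provide the `target_model` parameter in the `predictor.predict()` function. The model name is simply the name of the model `.tar` file we uploaded. The following is an example code snippet for the SAM model, which takes a pixel coordinate, a point label, and a dilation kernel size, and generates a segmentation mask of the object at that pixel location: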
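The snippet itself is missing here. The following minimal sketch assumes that the handler accepts JSON with a base64-encoded image. The field names (`point_coords`, `point_labels`, `dilate_kernel_size`, `mask`) and the archive name `sam.tar.gz` are hypothetical and must match whatever your SAM handler expects. A point label of 1 marks a foreground point, following SAM's prompt convention. The dilation default of 15 pixels is an assumption.

```python
import base64


def sam_payload(image: bytes, x: int, y: int, point_label: int = 1,
                dilate_kernel_size: int = 15) -> dict:
    """JSON body for the SAM model (field names are hypothetical)."""
    return {
        "image": base64.b64encode(image).decode("ascii"),
        "point_coords": [[x, y]],                  # pixel the user clicked
        "point_labels": [point_label],             # 1 = foreground point in SAM's convention
        "dilate_kernel_size": dilate_kernel_size,  # kernel the handler uses to grow the mask
    }


def segment(predictor, image: bytes, x: int, y: int) -> bytes:
    """Invoke SAM on the MME by naming its archive in target_model."""
    response = predictor.predict(data=sam_payload(image, x, y), target_model="sam.tar.gz")
    return base64.b64decode(response["mask"])  # hypothetical response field
```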

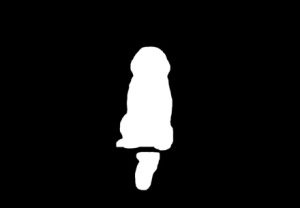

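To remove an unwanted object from an image, take the segmentation mask generated by SAM and feed it into the LaMa model together with the original image. The following images show an example.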

| Example image | Segmentation mask from SAM | Erase the dog using LaMa |
| --- | --- | --- |
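The same endpoint and predictor serve LaMa; only `target_model` changes. The following is a sketch with hypothetical field names (`image`, `mask`) and a hypothetical archive name, `lama.tar.gz`.

```python
import base64


def lama_payload(image: bytes, mask: bytes) -> dict:
    """Original image plus the SAM mask, both base64-encoded (field names hypothetical)."""
    return {
        "image": base64.b64encode(image).decode("ascii"),
        "mask": base64.b64encode(mask).decode("ascii"),
    }


def erase_object(predictor, image: bytes, mask: bytes) -> bytes:
    """Run LaMa on the MME; the predictor is the same one used for SAM."""
    response = predictor.predict(data=lama_payload(image, mask), target_model="lama.tar.gz")
    return base64.b64decode(response["image"])  # hypothetical response field
```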

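To modify or replace any object in an image with a text prompt, take the segmentation mask from SAM and feed it into the SD model together with the original image and the text prompt, as shown in the following example.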

| Example image | Segmentation mask from SAM | Replace using the SD model with the text prompt "a hamster on a bench" |
| --- | --- | --- |
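The following sketch chains the two calls of the replace flow. The archive names, the payload fields, and the `RecordingPredictor` stand-in are hypothetical. The stand-in exists only to exercise the chain locally without an endpoint; against a live endpoint, you would pass the SageMaker predictor instead.

```python
import base64


def b64(data: bytes) -> str:
    return base64.b64encode(data).decode("ascii")


def replace_object(predictor, image: bytes, x: int, y: int, prompt: str) -> bytes:
    """SAM mask at (x, y), then SD inpainting of the masked area guided by `prompt`."""
    # 1. Segment the clicked object with SAM.
    sam_out = predictor.predict(
        data={"image": b64(image), "point_coords": [[x, y]], "point_labels": [1]},
        target_model="sam.tar.gz",
    )
    # 2. Send the original image, the mask, and the prompt to SD inpainting.
    sd_out = predictor.predict(
        data={"image": b64(image), "mask": sam_out["mask"], "prompt": prompt},
        target_model="sd.tar.gz",
    )
    return base64.b64decode(sd_out["image"])


class RecordingPredictor:
    """Local stand-in that records which model each call targets."""

    def __init__(self):
        self.calls = []

    def predict(self, data, target_model=None):
        self.calls.append(target_model)
        if target_model == "sam.tar.gz":
            return {"mask": b64(b"mask")}
        return {"image": b64(("edited: " + data["prompt"]).encode())}
```

Both calls go to the same endpoint. The MME loads each target model on first use and keeps it resident while memory allows.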

Cost savings

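The benefits of SageMaker MMEs grow with the scale of model consolidation. The following table shows the GPU memory usage of the three models in this post. They are deployed on one g5.2xlarge instance using one SageMaker MME.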

| Model | GPU memory (MiB) |
| --- | --- |
| Segment Anything Model | 3,362 |
| Stable Diffusion Inpainting | 3,910 |
| LaMa | 852 |
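The following is a quick check of the numbers above. Together, the three models need 8,124 MiB, about a third of the instance's GPU memory, so all three can stay loaded at once. The 24 GiB capacity is taken from the cost table later in the post. The 90% usable-memory threshold is an assumption that leaves room for activations and framework overhead.

```python
# GPU memory per model in MiB, copied from the table above.
GPU_MEMORY_MIB = {
    "Segment Anything Model": 3362,
    "Stable Diffusion Inpainting": 3910,
    "LaMa": 852,
}

# 24 GiB of GPU memory on ml.g5.2xlarge, as listed in the post's cost table.
GPU_CAPACITY_MIB = 24 * 1024


def total_mib(models: dict) -> int:
    return sum(models.values())


def fits_on_gpu(models: dict, capacity_mib: int = GPU_CAPACITY_MIB,
                usable_fraction: float = 0.9) -> bool:
    """True if all models can stay loaded while leaving some memory free (fraction assumed)."""
    return total_mib(models) <= capacity_mib * usable_fraction


utilization = total_mib(GPU_MEMORY_MIB) / GPU_CAPACITY_MIB  # about 0.33
```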

Hosting the three models on one endpoint already saves cost, and for use cases with hundreds or thousands of models, the savings are much greater.

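For example, consider 100 Stable Diffusion models. Each model could be served by its own ml.g5.2xlarge endpoint (each model needs about 4 GiB of GPU memory), at $1.52 per instance hour in the US East (N. Virginia) Region. Serving all 100 models from their own endpoints would cost $218,880 per month. With SageMaker MMEs, a single endpoint using ml.g5.2xlarge instances can host four models simultaneously. This reduces production inference costs by 75%, to only $54,720 per month. The following table summarizes the differences between single-model and multi-model endpoints for this example. Provided the endpoint configuration has enough memory for your target models, steady-state invocation latency after all models are loaded will be similar to that of a single-model endpoint.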

| | Single-model endpoint | Multi-model endpoint |
| --- | --- | --- |
| Total endpoint price per month | $218,880 | $54,720 |
| Endpoint instance type | ml.g5.2xlarge | ml.g5.2xlarge |
| CPU memory capacity (GiB) | 32 | 32 |
| GPU memory capacity (GiB) | 24 | 24 |
| Price per instance hour | $1.52 | $1.52 |
| Number of instances per endpoint | 2 | 2 |
| Endpoints needed for 100 models | 100 | 25 |
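The monthly totals in the table can be reproduced as endpoints × 2 instances × $1.52 per instance hour × 720 hours. The 720-hour (30-day) month is an assumption, but it is the one that matches the post's figures. The sketch works in integer cents to avoid floating-point drift.

```python
import math

HOURS_PER_MONTH = 24 * 30  # a 30-day month reproduces the post's figures


def monthly_cost_usd(endpoints: int, instances_per_endpoint: int = 2,
                     price_cents_per_hour: int = 152) -> float:
    """Monthly price; integer cents avoid floating-point rounding until the end."""
    cents = endpoints * instances_per_endpoint * price_cents_per_hour * HOURS_PER_MONTH
    return cents / 100


def endpoints_needed(num_models: int, models_per_endpoint: int) -> int:
    return math.ceil(num_models / models_per_endpoint)


single = monthly_cost_usd(endpoints_needed(100, 1))  # one endpoint per model
multi = monthly_cost_usd(endpoints_needed(100, 4))   # four models per MME endpoint
savings = 1 - multi / single
```

Here `single` evaluates to 218880.0 and `multi` to 54720.0, a 75% reduction. Raising `models_per_endpoint` shows how the savings scale with consolidation.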

Clean up

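When you are done, follow the instructions in the cleanup section of the notebook to delete the resources provisioned in this post and avoid unnecessary charges. For details on inference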

Conclusion

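This post demonstrated the language-assisted editing capabilities made possible by generative AI models hosted on SageMaker MMEs with TorchServe. The example we shared shows how to use resource sharing and simplified model management with SageMaker MMEs while still using TorchServe as the model serving stack. We used three deep learning foundation models: SAM, SD 2 Inpainting, and LaMa. These models let us build powerful capabilities, such as erasing any unwanted object from an image and modifying or replacing any object in an image by supplying a text instruction. These features can help artists and content creators work more efficiently and meet their content demands by automating repetitive tasks, optimizing campaigns, and providing a hyper-personalized experience. We invite you to explore the example provided in this post and build your own UI experience using TorchServe on a SageMaker MME.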

To get started, refer to

About the authors

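James Wu is a Senior AI/ML Specialist Solutions Architect at AWS, helping customers design and build AI/ML solutions. James's work covers a wide range of ML use cases, with a primary interest in computer vision, deep learning, and scaling ML across the enterprise. Before joining AWS, James was an architect, developer, and technology leader for over 10 years, including 6 years in engineering and 4 years in the marketing and advertising industries.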


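Li Ning is a senior software engineer at AWS who specializes in building large-scale AI solutions. As a tech lead for TorchServe, a project jointly developed by AWS and Meta, her passion lies in using PyTorch and Amazon SageMaker to help customers embrace AI for the greater good. Outside of her professional endeavors, Li enjoys swimming, traveling, following the latest advancements in technology, and spending quality time with her family.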


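Ankith Gunapal is an AI Partner Engineer at Meta (PyTorch). He is passionate about model optimization and model serving, with experience ranging from RTL verification, embedded software, and computer vision to PyTorch. He holds a master's degree in Data Science and a master's degree in Telecommunications. Outside of work, Ankith is also an electronic dance music producer.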


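Saurabh Trikande is a Senior Product Manager for Amazon SageMaker Inference. He is passionate about working with customers and is motivated by the goal of democratizing machine learning. He focuses on core challenges related to deploying complex ML applications, multi-tenant ML models, cost optimizations, and making the deployment of deep learning models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch, and spending time with his family.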


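Subhash Talluri is a Lead AI/ML Solutions Architect in the Telecom Industry business unit at Amazon Web Services. He has been leading the development of innovative AI/ML solutions for telecom customers and partners worldwide. He brings interdisciplinary expertise in engineering and computer science to help build scalable, secure, and compliant AI/ML solutions through cloud-optimized architectures on AWS.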


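*The specific AWS generative AI services mentioned above are available only in AWS Regions outside China. AWS China recommends these services only to help you grow your overseas business and/or learn about leading-edge industry technologies.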