
How Twilio modernized its messaging postflight data store with Amazon DynamoDB

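Twilio supports omnichannel communication across voice, text, chat, and email. At every stage of the customer journey, Twilio Messaging lets businesses reach their customers on the apps those customers prefer. With the Programmable Messaging API, businesses can proactively notify customers about account activity, purchase confirmations, and shipping updates.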

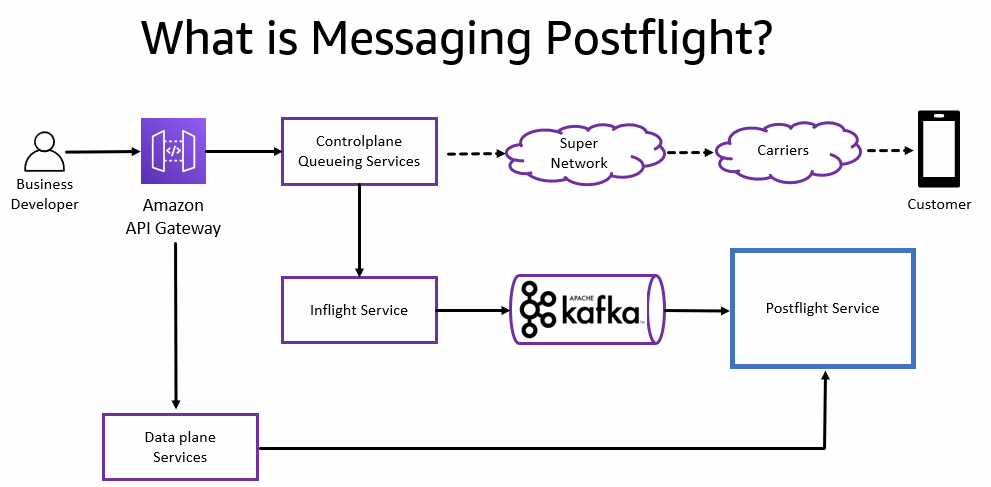

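In the Twilio Messaging data pipeline, a message's lifecycle has two phases:

- In-flight: Throughout a message's lifecycle, its events move through various in-flight states (such as queued, sent, or delivered) until the message is complete. Because the Twilio Messaging service is asynchronous, this in-flight state must be managed and persisted somewhere in the system.

- Postflight: Once a message reaches the postflight phase, its record must be saved to long-term storage for later retrieval and analytical processing.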
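The split between in-flight and postflight state can be sketched as a small status classifier. This is a minimal illustration under stated assumptions, not Twilio's implementation: only queued, sent, and delivered come from the post; the `undelivered` and `failed` statuses, the `TERMINAL` set, and the `apply_event` helper are hypothetical, and plain dictionaries stand in for the in-flight state store and the long-term postflight store.

```python
from enum import Enum


class MessageStatus(Enum):
    # queued/sent/delivered come from the post; undelivered/failed are assumed.
    QUEUED = "queued"
    SENT = "sent"
    DELIVERED = "delivered"
    UNDELIVERED = "undelivered"
    FAILED = "failed"


# Assumed terminal statuses: reaching one of these ends the in-flight phase.
TERMINAL = {MessageStatus.DELIVERED, MessageStatus.UNDELIVERED, MessageStatus.FAILED}


def lifecycle_stage(status: MessageStatus) -> str:
    """Classify a status as belonging to the in-flight or postflight phase."""
    return "postflight" if status in TERMINAL else "in-flight"


def apply_event(message_sid: str, status: MessageStatus,
                inflight: dict, postflight: dict) -> None:
    """Apply one status event for a message (hypothetical helper)."""
    if status in TERMINAL:
        # Final state: drop the short-lived in-flight entry and keep the
        # record in long-term storage for later retrieval and analytics.
        inflight.pop(message_sid, None)
        postflight[message_sid] = status
    else:
        # Still in flight: remember only the latest status.
        inflight[message_sid] = status
```

In this sketch only finalized records ever reach `postflight`, which mirrors the post's description of postflight records being written to long-term storage once a message is complete.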

Historically, all Twilio Messaging data was kept in the same data store, regardless of which lifecycle phase a message was in.

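Twilio Messaging Postflight is Twilio's backend system that powers the data plane for all message traffic Twilio processes (SMS, MMS, and OTT channels such as WhatsApp). It lets users and internal systems query, update, and delete message log data through the Postflight API. The service is responsible for long-term storage and retrieval of finalized messages, support for different query patterns, privacy, and custom data retention.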

In this post, we discuss how Twilio used Amazon DynamoDB to modernize its Messaging Postflight data store.

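This modernization eliminated the operational complexity and over-provisioning challenges that Twilio faced with its legacy architecture. After the modernization, processing-delay incidents dropped from roughly one per month to zero. With the legacy MySQL data store, Twilio experienced delays of 10 to 19 hours during the 2020 Black Friday and Cyber Monday peak. With DynamoDB, Twilio had zero delays during the same peak in 2021. Twilio has since decommissioned the entire 90-node MySQL database, saving $2.5 million per year.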

Legacy architecture overview

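The Postflight data store handles access patterns over tens of billions of records associated with top Twilio accounts. The legacy architecture processed 450 million messages per day and held 260 TB of data, with peaks of 40,000 write requests per second (RPS) and 12,000 read RPS.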
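The gap between average and peak load is what made sizing for the peak expensive. A rough back-of-the-envelope check, assuming for simplicity that each message corresponds to one write (in practice a message may generate several writes over its lifecycle, which would raise the average write rate and shrink the ratio):

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

messages_per_day = 450_000_000  # from the post
peak_write_rps = 40_000         # from the post

# Average rate if the daily volume were spread evenly across the day.
avg_message_rps = messages_per_day / SECONDS_PER_DAY

# How many times larger the peak write rate is than that average.
peak_to_avg = peak_write_rps / avg_message_rps

print(f"average: {avg_message_rps:,.0f} messages/s")  # average: 5,208 messages/s
print(f"peak/average: {peak_to_avg:.1f}x")            # peak/average: 7.7x
```

Under that one-write-per-message assumption, a cluster sized for the 40,000 RPS peak would use only about 13 percent of its write capacity on an average second.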

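Twilio Messaging Postflight had been using a sharded MySQL cluster architecture to process and store 260 TB of current and historical message log data. Although this architecture was scalable in theory, in practice it was not flexible enough to absorb surges in customer traffic seamlessly.

The legacy architecture had a number of problems, especially at scale. Historical challenges included the operational complexity of managing a 90-node MySQL cluster, high write contention during traffic peaks, data delays, over-provisioned capacity during off-peak hours, and noisy-neighbor problems, in which a large spike in one customer's traffic caused write contention and degraded the availability of the MySQL database.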

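The legacy data store had the following properties and requirements:

- Writes go to a single MySQL table, and each record is smaller than 1 KB. Data is ingested into the MySQL database from a Kafka message bus.

- Regionalizing the MySQL database requires creating a new cluster in the new Region.

- Anywhere from a thousand to tens of thousands of writes per second, with sharp spikes.

- Data is retained in the database for several months.

- At least 1 million active customers.

- Customers expect their data to be accessible within minutes.

- Traffic is 85% writes and 15% reads.

- The MySQL cluster consists of one primary and three replicas per shard.

- Account-based sharding.

- The MySQL cluster runs on multiple Amazon Elastic Compute Cloud (Amazon EC2) i3en clusters.

- The MySQL database currently holds about 100 billion records.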

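In the Twilio Messaging data pipeline, messages are written to Apache Kafka topics. An intermediate service transforms the messages, and another service ingests them into the MySQL database.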
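The three-stage flow (Kafka topic, transforming service, ingesting service) can be sketched with plain Python generators. This is a hypothetical illustration: an in-memory list stands in for the Kafka topic, a dictionary stands in for the database, and `RawEvent`, `MessageRecord`, `transform`, and `ingest` are names invented for this example rather than Twilio or Kafka APIs. Keying the store by account and message ID makes a replayed event overwrite its earlier row instead of duplicating it, which matters because Kafka consumers are commonly run with at-least-once delivery.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, Tuple


@dataclass(frozen=True)
class RawEvent:
    """Hypothetical message event as read from a Kafka topic."""
    account_sid: str
    message_sid: str
    status: str
    body: str


@dataclass(frozen=True)
class MessageRecord:
    """Hypothetical normalized record written by the ingestion service."""
    account_sid: str
    message_sid: str
    status: str
    body_length: int


def transform(events: Iterable[RawEvent]) -> Iterator[MessageRecord]:
    """Intermediate service: normalize each raw event into a storage record."""
    for e in events:
        yield MessageRecord(e.account_sid, e.message_sid, e.status.lower(), len(e.body))


def ingest(records: Iterable[MessageRecord],
           store: Dict[Tuple[str, str], MessageRecord]) -> int:
    """Ingestion service: upsert records keyed by (account, message); returns count."""
    count = 0
    for r in records:
        # Upsert: a replayed or later event for the same message replaces the old row.
        store[(r.account_sid, r.message_sid)] = r
        count += 1
    return count
```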

The following diagram illustrates the legacy architecture.

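Twilio faced the following challenges in scaling and maintaining its legacy self-managed MySQL data store:

- The sharded MySQL design consisted of multiple self-managed clusters running on Amazon EC2.

- Account-based sharding was sensitive to traffic surges from large customers, especially noisy neighbors.

- Shard splits were time-consuming and risky because of their impact on live traffic.

- A surge in one customer's traffic required throttling processing for the entire shard to keep the database from failing. This could cause data delays for every customer on that shard, more support tickets, and a loss of customer trust.

- Provisioning the database clusters for peak load was inefficient, because most of the time only a small fraction of that capacity was used.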
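Why account-based sharding is sensitive to noisy neighbors can be shown with a small simulation. This is a sketch under assumptions: the shard count, the SHA-256-based `shard_for` routing function, and the traffic mix are hypothetical and do not describe Twilio's actual sharding scheme. Because every record of an account maps to the same shard, one account's burst lands entirely on one shard, together with every other account that happens to share it.

```python
import hashlib
from collections import Counter
from typing import Iterable

NUM_SHARDS = 8  # hypothetical shard count


def shard_for(account_sid: str, num_shards: int = NUM_SHARDS) -> int:
    """Route an account to a shard; all of its records go to the same shard."""
    # Use a stable hash (Python's built-in hash() of str is randomized per process).
    digest = hashlib.sha256(account_sid.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def shard_load(write_accounts: Iterable[str]) -> Counter:
    """Count writes per shard, given the account of each write."""
    return Counter(shard_for(acct) for acct in write_accounts)


# 100 ordinary accounts with 10 writes each, plus one burst of 5,000 writes.
writes = [f"AC{i:03d}" for i in range(100) for _ in range(10)]
writes += ["AC_NOISY"] * 5_000

load = shard_load(writes)
hot = shard_for("AC_NOISY")
others = sorted(v for s, v in load.items() if s != hot)
print(f"hot shard {hot}: {load[hot]} writes; other shards: {others}")
```

Throttling the hot shard to protect it also throttles every ordinary account routed to that shard, which is the data-delay effect listed among the challenges.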

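In the following sections, we describe the design considerations that led to choosing DynamoDB as the storage layer, and the steps involved in building and scaling the data processing pipeline and migrating it to DynamoDB.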

To help identify the right purpose-built AWS database, AW

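Solution overview

Twilio Messaging chose DynamoDB for the following reasons:

- Postflight query patterns can be expressed as range queries on an ordered key-value store.

- DynamoDB supports low-latency point and range queries that meet Twilio's requirement of a few hundred milliseconds.

- DynamoDB provides predictable performance and seamless scalability. A single DynamoDB partition can serve up to 3,000 read capacity units (RCUs) and 1,000 write capacity units (WCUs), but with a good partition key design the table scales horizontally across partitions, so maximum throughput is effectively unlimited. To learn more, see Scaling DynamoDB: How partitions, hot keys, and split for heat impact performance (Part 1: Loading).

- DynamoDB has an auto scaling capability that dynamically adjusts provisioned throughput capacity in response to actual traffic, so Twilio doesn't have to over-provision for peak workloads as it did with the legacy MySQL database.

- DynamoDB is serverless, so it removes the operational burden of self-managed MySQL. With DynamoDB, you don't have to worry about hardware provisioning, setup and configuration, replication, software patching, or cluster scaling.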
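The idea that Postflight lookups become range queries on an ordered key-value store can be illustrated with a toy in-memory store. This sketch is not the DynamoDB API: `OrderedKVStore` and the key layout (account as partition key, an ISO 8601 timestamp plus message ID as sort key) are assumptions for illustration. ISO 8601 timestamps sort lexicographically in time order, so a time window becomes one contiguous key range, similar in spirit to a DynamoDB `Query` with a `BETWEEN` condition on the sort key.

```python
import bisect
from typing import Any, Dict, List, Tuple


class OrderedKVStore:
    """Toy ordered key-value store keyed by (partition_key, sort_key).

    Mimics the shape of a partition-key plus sort-key range lookup;
    it is not the DynamoDB API.
    """

    def __init__(self) -> None:
        self._keys: List[Tuple[str, str]] = []  # kept in sorted order
        self._items: Dict[Tuple[str, str], Any] = {}

    def put(self, pk: str, sk: str, item: Any) -> None:
        """Insert or overwrite the item stored at (pk, sk)."""
        key = (pk, sk)
        if key not in self._items:
            bisect.insort(self._keys, key)
        self._items[key] = item

    def query_range(self, pk: str, sk_start: str, sk_end: str) -> List[Any]:
        """Items in partition pk with sk_start <= sort key <= sk_end, in key order."""
        lo = bisect.bisect_left(self._keys, (pk, sk_start))
        hi = bisect.bisect_right(self._keys, (pk, sk_end))
        return [self._items[k] for k in self._keys[lo:hi]]


store = OrderedKVStore()
store.put("AC1", "2021-11-25T12:00:00Z#SM1", "a")
store.put("AC1", "2021-11-26T09:00:00Z#SM2", "b")
store.put("AC1", "2021-11-26T18:30:00Z#SM3", "c")
store.put("AC1", "2021-11-27T01:00:00Z#SM4", "d")
store.put("AC2", "2021-11-26T10:00:00Z#SM5", "e")
print(store.query_range("AC1", "2021-11-26", "2021-11-27"))  # ['b', 'c']
```

Because the range is bounded by the partition key, account `AC2` never appears in `AC1`'s results even though its timestamps fall in the same window.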

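To confirm that DynamoDB could meet Twilio's requirements as a replacement for the legacy MySQL cluster, it had to meet the following success criteria:

- Write traffic to the base table can scale to 100,000 requests per second (RPS) with latency in the tens of milliseconds.

- Range queries can serve 1,000 RPS with reasonable latency.

- Replication lag for writes from the base table to its indexes does not exceed 1 second.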
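The success criteria can be turned into a rough capacity estimate using the per-partition limits quoted in the solution overview and DynamoDB's capacity-unit sizing (one WCU covers one write per second of an item up to 1 KB; one RCU covers one strongly consistent read per second of up to 4 KB, or two eventually consistent reads; a `Query` is charged on the combined size of the items it reads, rounded up to the next 4 KB). The 50-items-per-query figure is a hypothetical assumption, and the partition counts are lower bounds that only hold if traffic spreads evenly across partition keys.

```python
import math

WCU_PER_PARTITION = 1_000  # per-partition write limit cited in the post
RCU_PER_PARTITION = 3_000  # per-partition read limit cited in the post


def wcus_for_writes(writes_per_sec: int, item_kb: float) -> int:
    """One WCU = one write/s of up to 1 KB; larger items round up to whole KB."""
    return writes_per_sec * math.ceil(item_kb)


def rcus_for_queries(queries_per_sec: int, items_per_query: int, item_kb: float,
                     strongly_consistent: bool = False) -> float:
    """A Query is billed on the total size it reads, rounded up to 4 KB units;
    eventually consistent reads cost half as much."""
    units = math.ceil(items_per_query * item_kb / 4)
    per_query = units if strongly_consistent else units / 2
    return queries_per_sec * per_query


def min_partitions(capacity: float, per_partition: int) -> int:
    """Lower bound on partitions, assuming traffic spreads evenly across them."""
    return math.ceil(capacity / per_partition)


# Criterion: 100,000 write RPS with records under 1 KB.
writes = wcus_for_writes(100_000, 1.0)
# Criterion: 1,000 range-query RPS; 50 items of 1 KB per query is hypothetical.
reads = rcus_for_queries(1_000, 50, 1.0)

print(writes, min_partitions(writes, WCU_PER_PARTITION))  # 100000 100
print(reads, min_partitions(reads, RCU_PER_PARTITION))    # 6500.0 3
```

Under these assumptions the write criterion dominates. Each range query still reads from a single partition key, so a very active account can concentrate reads even when the table as a whole has ample capacity.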

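Twilio Messaging's principal architect ran load tests with help from AWS Professional Services to verify that DynamoDB met Twilio's performance and latency requirements. AWS Professional Services used the performance tool

AWS Professional Services reviewed the DynamoDB data model (schema design), derived from the legacy MySQL data structure, to ensure that it could, without

The partition key design included range lookups and synthetic partition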

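Through load testing, AWS Professional Services confirmed that DynamoDB could scale to meet Twilio's requirements. AWS and Twilio reviewed the load test results together and confirmed that they met Twilio's success criteria.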
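The partition key design mentions synthetic partitions. One common way to build them in DynamoDB is write sharding with a calculated suffix: append a suffix derived from a stable hash of each item to the partition key, so a single busy account spreads across several keys, then fan a range query out over every suffix and merge the results. The sketch below is a hypothetical illustration of that general technique, not Twilio's confirmed key design; `NUM_SUFFIXES`, the `account#suffix` key format, and the dictionary standing in for a table are assumptions.

```python
import hashlib
import heapq
from typing import Dict, List, Tuple

NUM_SUFFIXES = 10  # hypothetical fan-out per account

Row = Tuple[str, object]       # (sort_key, item)
Table = Dict[str, List[Row]]   # synthetic partition key -> rows sorted by sort key


def synthetic_pk(account_sid: str, message_sid: str, n: int = NUM_SUFFIXES) -> str:
    """Calculated suffix: the same message always maps to the same key."""
    digest = hashlib.sha256(message_sid.encode("utf-8")).digest()
    return f"{account_sid}#{int.from_bytes(digest[:4], 'big') % n}"


def put(table: Table, account_sid: str, message_sid: str, sort_key: str,
        item: object, n: int = NUM_SUFFIXES) -> None:
    """Write an item under its synthetic partition key, keeping rows ordered."""
    rows = table.setdefault(synthetic_pk(account_sid, message_sid, n), [])
    rows.append((sort_key, item))
    rows.sort(key=lambda r: r[0])


def fan_out_query(table: Table, account_sid: str, sk_start: str, sk_end: str,
                  n: int = NUM_SUFFIXES) -> List[object]:
    """Range-query every suffix of an account and merge results in sort-key order."""
    per_key = []
    for i in range(n):
        rows = table.get(f"{account_sid}#{i}", [])
        per_key.append([r for r in rows if sk_start <= r[0] <= sk_end])
    return [item for _, item in heapq.merge(*per_key, key=lambda r: r[0])]
```

The trade-off is that one logical range query becomes `n` physical queries, so the suffix count balances how widely writes spread against how much read fan-out is acceptable.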

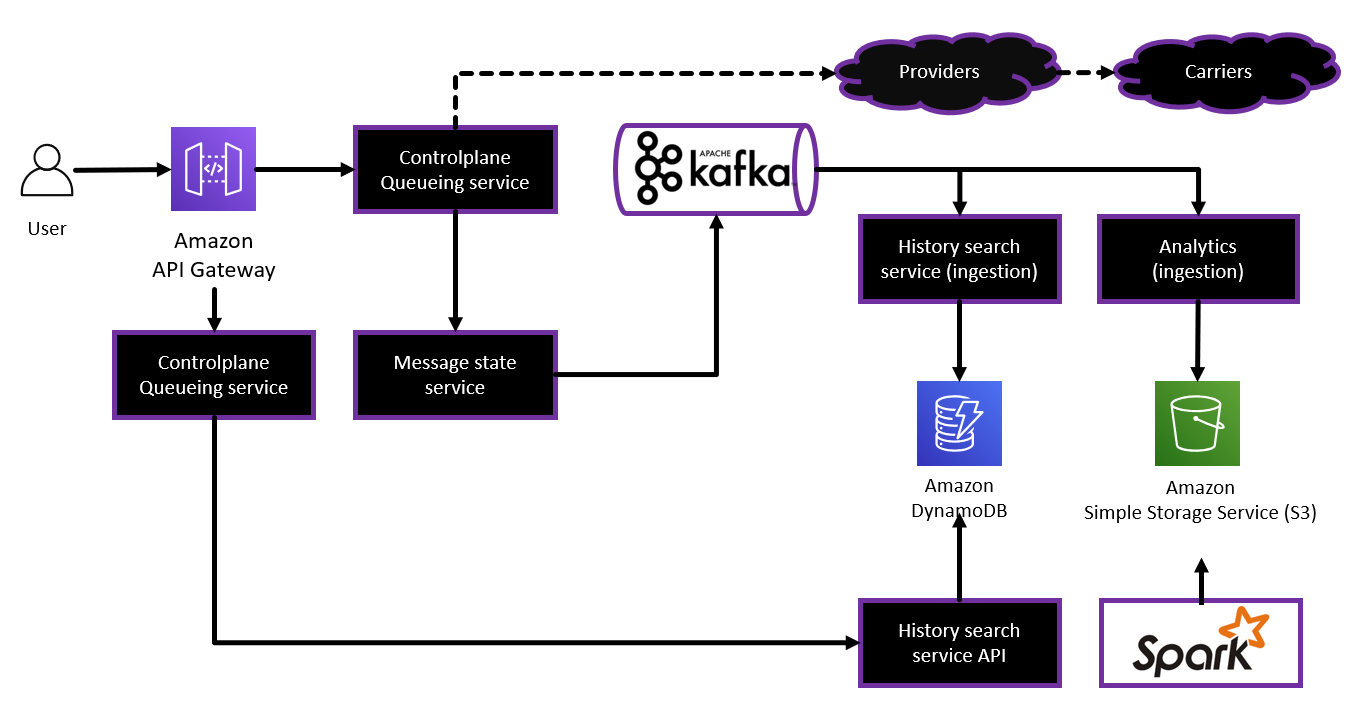

The following diagram shows the solution in which DynamoDB replaces Twilio Messaging Postflight's multi-node, self-managed MySQL data store running on EC2 i3en instances.

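Conclusion

In this post, you learned how Twilio used DynamoDB to modernize its 90-node MySQL cluster and reduce its data processing delays to zero, eliminating operational complexity and over-provisioning challenges while significantly reducing costs.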

Using

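About the authors

ND Ngoka is a Senior Solutions Architect at AWS. He has more than 18 years of experience in storage. He helps AWS customers build resilient and scalable solutions in the cloud. ND enjoys gardening, hiking, and exploring new places when he gets the chance.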

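Nikolai Kolesnikov is a Principal Data Architect with more than 20 years of experience in data engineering, architecture, and building distributed applications. He helps AWS customers build highly scalable applications using Amazon DynamoDB and Amazon Keyspaces. He also leads Amazon Keyspaces ProServe migration projects.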

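Greg Krumm is a Senior DynamoDB Specialist Solutions Architect at AWS. He helps AWS customers architect highly available, scalable, and performant systems on Amazon DynamoDB. In his spare time, Greg enjoys spending time with his family and hiking in the forests around Portland, Oregon.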

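Vijay Bhat is a Principal Software Engineer at Twilio, working on the core data backend systems that power the communications platform. He has deep industry experience building high-performance data systems at scale for machine learning and analytics applications. Before joining Twilio, he led teams building marketing automation and incentive program systems at Lyft, and led consulting engagements at Facebook, Intuit, and Capital One. Outside of work, he enjoys spending time with his two young daughters, traveling, and playing guitar.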
