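AWS Database Migration Service (AWS DMS) is a fully managed service that helps you migrate databases to AWS quickly and securely. Every customer's use case is unique: you can use AWS DMS not only as a one-time data migration solution, but also to replicate data according to the requirements of downstream applications.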

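In this post, we demonstrate two options for configuring AWS DMS to filter out deletes when replicating data to a target database. The first strategy uses Amazon Simple Storage Service (Amazon S3) as a staging environment, and the second uses AWS DMS transformation rules to flag delete operations during replication. This configuration lets you selectively retain deleted data in the target database for auditing purposes, even when the source database undergoes regular purge operations.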

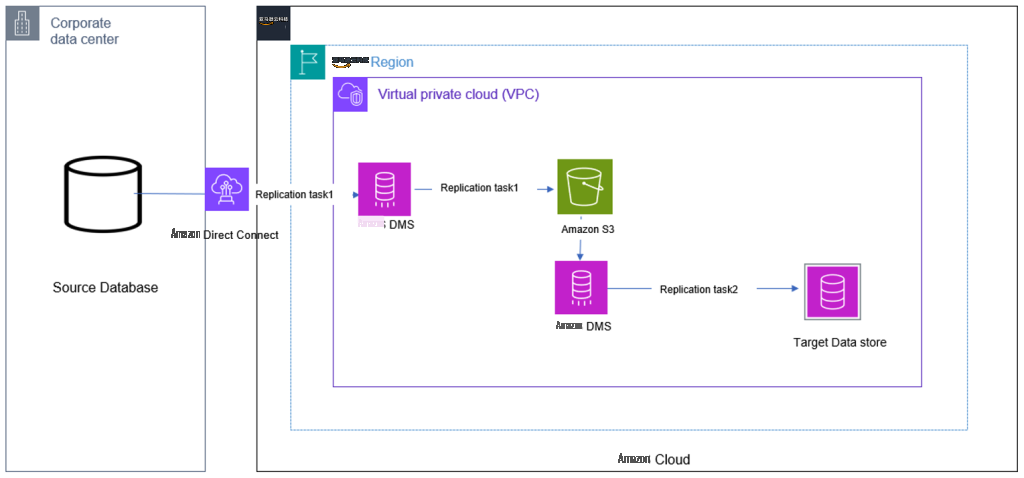

Using Amazon S3 as a staging environment

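One way to filter deletes with AWS DMS is to use Amazon S3 as a staging environment. AWS DMS migrates inserts and updates to the S3 bucket and ignores deletes from the source database. You can use this approach with any source or target endpoint that AWS DMS supports. Before you start the migration, review the limitations of the endpoints you choose.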

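As shown in the following diagram, Amazon S3 acts as an intermediate system. The CdcInsertsAndUpdates endpoint setting, which is available when Amazon S3 is the target, keeps deletes out of the final target.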

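AWS Direct Connect is an optional but recommended component for improved security. AWS Direct Connect is a network service that provides an alternative to using the internet to connect to AWS. With AWS Direct Connect, data that would previously have been transported over the internet is delivered through a private network connection between your facilities and AWS.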

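When you use the CdcInsertsAndUpdates S3 target endpoint setting, AWS DMS adds an extra first field that indicates whether the row was inserted (I) or updated (U) at the source database.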

How AWS DMS creates and sets this field depends on the migration task type and on the settings of IncludeOpForFullLoad, CdcInsertsOnly, and CdcInsertsAndUpdates.

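If our AWS DMS task performs both full load and change data capture (CDC), we must add the IncludeOpForFullLoad parameter to our target S3 endpoint settings. AWS DMS then creates an additional field in the full-load files, which keeps the columns in the full-load files and the CDC files consistent.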
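As a sketch of the target endpoint configuration described above, the helper below assembles an `S3Settings` dictionary for the staging endpoint. It assumes you will pass the result to the DMS `CreateEndpoint` API (for example, boto3's `create_endpoint` with `EndpointType="target"`, `EngineName="s3"`, and `S3Settings=...`); the helper name, bucket name, and role ARN are hypothetical placeholders. The mutual-exclusion check reflects the AWS DMS documentation, which does not allow `CdcInsertsOnly` and `CdcInsertsAndUpdates` to both be true on the same endpoint.

```python
def build_s3_target_settings(bucket_name: str, role_arn: str, *,
                             full_load_and_cdc: bool,
                             cdc_inserts_and_updates: bool = True,
                             cdc_inserts_only: bool = False) -> dict:
    """Hypothetical helper: S3Settings for a DMS S3 target used as a staging area."""
    # Per the DMS docs, the two CDC filters cannot both be true on one endpoint.
    if cdc_inserts_only and cdc_inserts_and_updates:
        raise ValueError("CdcInsertsOnly and CdcInsertsAndUpdates cannot both be true")
    return {
        "BucketName": bucket_name,          # placeholder staging bucket
        "ServiceAccessRoleArn": role_arn,   # placeholder IAM role that DMS assumes
        "DataFormat": "csv",
        # Write only INSERT/UPDATE (or only INSERT) CDC records; deletes are skipped.
        "CdcInsertsAndUpdates": cdc_inserts_and_updates,
        "CdcInsertsOnly": cdc_inserts_only,
        # For full load + CDC tasks, add the operation column to full-load files
        # so they have the same number of columns as the CDC files.
        "IncludeOpForFullLoad": full_load_and_cdc,
    }


settings = build_s3_target_settings(
    "my-dms-staging-bucket",
    "arn:aws:iam::123456789012:role/my-dms-s3-role",
    full_load_and_cdc=True,
)
```

Setting `cdc_inserts_and_updates=False, cdc_inserts_only=True` gives the inserts-only variant mentioned later in this section.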

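Without this parameter, the full-load files and the CDC files have different numbers of columns, which can cause the following error if you try to consume the data from both sets of files together: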

[SOURCE_UNLOAD ]E: Failed to write record id: 2, Number of values: 6 is not equal to number of columns: 5. [1020417] (file_unload.c:477)

For example, suppose our source table contains the following data:

+----+----------------+------+--------------------+-------------------------------+
| id | name           | age  | email              | address                       |
+----+----------------+------+--------------------+-------------------------------+
|  1 | John Doe       |   30 | john@example.com   | 123 Any Street, Any Town, USA |
|  2 | Jane Smith     |   25 | jane@example.com   | 456 Any Street, Any Town, USA |
|  3 | Carlos Salazar |   40 | carlos@example.com | 789 Any Street, Any Town, USA |
+----+----------------+------+--------------------+-------------------------------+

Now suppose we run the following DML operations against this table:

-- Update a row
UPDATE my_table SET age = 34 WHERE id = 1;
Query OK, 1 row affected (0.090 sec)
Rows matched: 1 Changed: 1 Warnings: 0

-- Delete a row
DELETE FROM my_table WHERE id = 2;
Query OK, 1 row affected (0.088 sec)

-- Insert another row
INSERT INTO my_table (id, name, age, email, address) VALUES (5, 'Mary Major', 35, 'mary@example.com', '231 Main Street, Anytown, USA');

After these operations, the data in our table looks like this:

+----+----------------+------+--------------------+-------------------------------+
| id | name           | age  | email              | address                       |
+----+----------------+------+--------------------+-------------------------------+
|  1 | John Doe       |   34 | john@example.com   | 123 Any Street, Any Town, USA |
|  3 | Carlos Salazar |   40 | carlos@example.com | 789 Any Street, Any Town, USA |
|  5 | Mary Major     |   35 | mary@example.com   | 231 Main Street, Anytown, USA |
+----+----------------+------+--------------------+-------------------------------+

The following is the AWS DMS output in the S3 staging bucket when we use the CdcInsertsAndUpdates parameter:

U 1 John Doe 34 john@example.com 123 Main St

I 5 Mary Major 35 mary@example.com 321 Pine St

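Notice that with the CdcInsertsAndUpdates parameter, only inserts and updates are captured; deletes are filtered out. This is because the parameter tells AWS DMS to write only inserted or updated change data and to drop all deleted change data.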
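To make the filtering concrete, the following local simulation (not AWS DMS code) replays the three DML changes from the example and keeps only the operations the setting lets through, writing the operation flag as the first CSV field. The event list and the `write_cdc_file` helper are illustrative assumptions, and actual DMS file naming, quoting, and layout may differ. The `inserts_only` switch mimics `CdcInsertsOnly`.

```python
import csv
import io

# Hypothetical change events matching the DML example: (operation, row values).
CDC_EVENTS = [
    ("U", [1, "John Doe", 34, "john@example.com", "123 Any Street, Any Town, USA"]),
    ("D", [2, "Jane Smith", 25, "jane@example.com", "456 Any Street, Any Town, USA"]),
    ("I", [5, "Mary Major", 35, "mary@example.com", "231 Main Street, Anytown, USA"]),
]


def write_cdc_file(events, inserts_only=False):
    """Simulate CDC filtering; return CSV text with the op flag as the first field."""
    allowed = {"I"} if inserts_only else {"I", "U"}
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    for op, values in events:
        if op in allowed:  # deletes (and updates, in inserts-only mode) are skipped
            writer.writerow([op, *values])
    return buf.getvalue()


print(write_cdc_file(CDC_EVENTS), end="")
# U,1,John Doe,34,john@example.com,"123 Any Street, Any Town, USA"
# I,5,Mary Major,35,mary@example.com,"231 Main Street, Anytown, USA"
```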

Next, we use this intermediate S3 bucket as a source and create a second AWS DMS task between Amazon S3 and the target database.

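When we configure Amazon S3 as a source, we also need to provide a JSON file with an external table definition so that AWS DMS can replicate the data correctly. The following is the external table definition we used: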

{
  "TableCount": "1",
  "Tables": [
    {
      "TableName": "my_table",
      "TablePath": "test/my_table",
      "TableOwner": "test",
      "TableColumns": [
        {
          "ColumnName": "operation_indicator",
          "ColumnType": "STRING",
          "ColumnNullable": "false",
          "ColumnLength": "10"
        },
        {
          "ColumnName": "id",
          "ColumnType": "INT8",
          "ColumnNullable": "false",
          "ColumnIsPk": "true"
        },
        {
          "ColumnName": "name",
          "ColumnType": "STRING",
          "ColumnLength": "20"
        },
        {
          "ColumnName": "age",
          "ColumnType": "INT8"
        },
        {
          "ColumnName": "email",
          "ColumnType": "STRING",
          "ColumnLength": "100"
        },
        {
          "ColumnName": "address",
          "ColumnType": "STRING",
          "ColumnLength": "50"
        }
      ],
      "TableColumnsTotal": "6"
    }
  ]
}
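Because `TableColumnsTotal` has to agree with the number of entries in `TableColumns`, it can help to generate the definition rather than edit it by hand. The sketch below rebuilds the definition above in Python and serializes it into the `ExternalTableDefinition` field of an S3 source endpoint's `S3Settings`. The helper name, bucket name, and role ARN are hypothetical, and any other source settings your task needs are omitted.

```python
import json

# Columns of the staged files: the operation flag written by the first task,
# followed by the source table's columns (names and types from this post).
COLUMNS = [
    {"ColumnName": "operation_indicator", "ColumnType": "STRING",
     "ColumnNullable": "false", "ColumnLength": "10"},
    {"ColumnName": "id", "ColumnType": "INT8",
     "ColumnNullable": "false", "ColumnIsPk": "true"},
    {"ColumnName": "name", "ColumnType": "STRING", "ColumnLength": "20"},
    {"ColumnName": "age", "ColumnType": "INT8"},
    {"ColumnName": "email", "ColumnType": "STRING", "ColumnLength": "100"},
    {"ColumnName": "address", "ColumnType": "STRING", "ColumnLength": "50"},
]


def external_table_definition(table, owner, path, columns):
    """Hypothetical helper: derive TableColumnsTotal from the column list."""
    return {
        "TableCount": "1",
        "Tables": [{
            "TableName": table,
            "TablePath": path,
            "TableOwner": owner,
            "TableColumns": columns,
            "TableColumnsTotal": str(len(columns)),
        }],
    }


definition = external_table_definition("my_table", "test", "test/my_table", COLUMNS)

# Partial S3 source settings; the definition is passed as a JSON string.
s3_source_settings = {
    "BucketName": "my-dms-staging-bucket",                            # placeholder
    "ServiceAccessRoleArn": "arn:aws:iam::123456789012:role/my-dms-s3-role",  # placeholder
    "ExternalTableDefinition": json.dumps(definition),
}
```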

The target database shows that the delete operation was not applied: the record with ID 2 still exists.

+---------------------+----+----------------+------+--------------------+-------------------------------+
| operation_indicator | id | name           | age  | email              | address                       |
+---------------------+----+----------------+------+--------------------+-------------------------------+
| U                   |  1 | John Doe       |   34 | john@example.com   | 123 Any Street, Any Town, USA |
| I                   |  2 | Jane Doe       |   25 | jane@example.com   | 456 Any Street, Any Town, USA |
| I                   |  3 | Carlos Salazar |   40 | carlos@example.com | 789 Any Street, Any Town, USA |
| I                   |  5 | Mary Major     |   35 | mary@example.com   | 231 Any Street, Any Town, USA |
+---------------------+----+----------------+------+--------------------+-------------------------------+

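By using Amazon S3 as a staging environment to filter DML operations, you can selectively capture only the changes you want to replicate. For example, if we only want to replicate inserts, we can use CdcInsertsOnly instead. This helps us retain deleted data on the target system for auditing purposes, even after those records are deleted in the source system.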

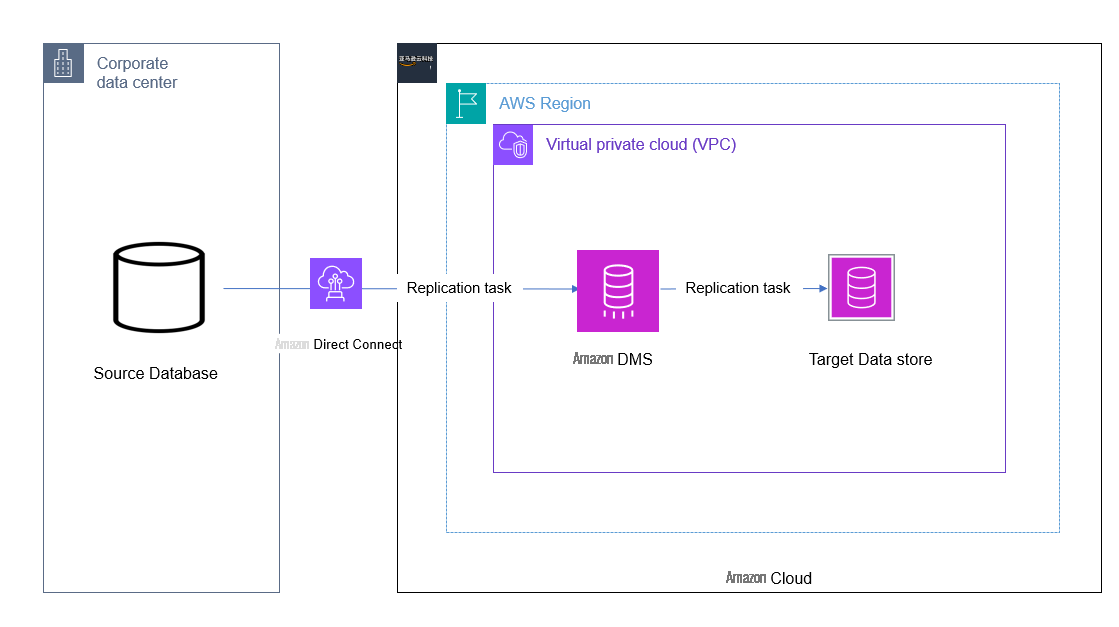

Using AWS DMS transformation rules

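As shown in the following diagram, AWS DMS migrates and replicates data from an on-premises data center. Using the built-in transformation rule functionality, it filters out delete operations by flagging them in the target instead of applying them.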

As in the first architecture, AWS Direct Connect is an optional but recommended component for improved security, because it carries the data over a private network connection between your facilities and AWS instead of over the internet.

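The operation_indicator function tags each target record with a DML operation identifier in an extra column of the target table. To add this extra column, use the following transformation rule in the table mapping section of your AWS DMS task. With this rule, records in the target table are flagged with the user-provided values specified in the expression parameter of the transformation JSON rule. One prerequisite for this feature is that both the source and target tables must have a primary key.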

{
  "rule-type": "transformation",
  "rule-id": "2",
  "rule-name": "2",
  "rule-target": "column",
  "object-locator": {
    "schema-name": "%",
    "table-name": "%"
  },
  "rule-action": "add-column",
  "value": "Operation",
  "expression": "operation_indicator('D', 'U', 'I')",
  "data-type": {
    "type": "string",
    "length": 50
  }
}

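This lets you keep a record active in the target system even after it has been deleted at the source. Based on the preceding transformation rule, records deleted at the source are flagged in the target system with the user-provided value D.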
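A DMS task's table mapping also needs at least one selection rule, so the transformation rule above is normally one entry in a larger `rules` array. The following sketch assembles such a document, assuming a selection rule that includes every schema and table (`%`); narrow the object locator to your own schema and table names. The helper name and its parameters are hypothetical; the serialized string is what you would supply as the task's table mappings (for example, the `TableMappings` parameter of boto3's `create_replication_task`).

```python
import json


def table_mappings(flag_delete="D", flag_update="U", flag_insert="I",
                   column_name="Operation"):
    """Hypothetical helper: table-mapping document with selection + flag rule."""
    expression = (f"operation_indicator('{flag_delete}', "
                  f"'{flag_update}', '{flag_insert}')")
    return {
        "rules": [
            {   # Selection rule: include every table in every schema.
                "rule-type": "selection",
                "rule-id": "1",
                "rule-name": "1",
                "object-locator": {"schema-name": "%", "table-name": "%"},
                "rule-action": "include",
            },
            {   # Transformation rule from this post: add the flag column.
                "rule-type": "transformation",
                "rule-id": "2",
                "rule-name": "2",
                "rule-target": "column",
                "object-locator": {"schema-name": "%", "table-name": "%"},
                "rule-action": "add-column",
                "value": column_name,
                "expression": expression,
                "data-type": {"type": "string", "length": 50},
            },
        ]
    }


print(json.dumps(table_mappings(), indent=2))
```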

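To test this feature, we used the same table metadata and the DML transactions described at the beginning of this post. The final output in the target table after these activities is as follows: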

+----+----------------+------+--------------------+-------------------------------+---------------------+
| id | name           | age  | email              | address                       | operation_indicator |
+----+----------------+------+--------------------+-------------------------------+---------------------+
|  3 | Carlos Salazar |   40 | carlos@example.com | 789 Any Street, Any Town, USA |                     |
|  2 | Jane Smith     |   25 | jane@example.com   | 456 Any Street, Any Town, USA | D                   |
|  1 | John Doe       |   34 | john@example.com   | 123 Any Street, Any Town, USA | U                   |
|  5 | Mary Major     |   35 | mary@example.com   | 231 Main Street, Anytown, USA | I                   |
+----+----------------+------+--------------------+-------------------------------+---------------------+

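As specified in the transformation rule, the record with ID 2, which has the value D in the operation column, remains intact in the target. We can also view the table contents without the deleted rows by running a query, or by creating a view, with the condition <> 'D'.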
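The following local sketch uses SQLite (not a DMS target) to reproduce the soft-delete behavior and the filtering view. It assumes the flag column is named `operation_indicator` as in the output above (in practice it is whatever the rule's `value` sets) and that unflagged full-load rows hold NULL. The view uses `COALESCE` because a bare `operation_indicator <> 'D'` evaluates to NULL for those rows and would silently hide them.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""CREATE TABLE my_table (
    id INTEGER PRIMARY KEY, name TEXT, age INTEGER, email TEXT,
    address TEXT, operation_indicator TEXT)""")

# Full-load rows arrive without a flag (NULL), matching the blank value above.
conn.executemany(
    "INSERT INTO my_table VALUES (?, ?, ?, ?, ?, NULL)",
    [(1, "John Doe", 30, "john@example.com", "123 Any Street, Any Town, USA"),
     (2, "Jane Smith", 25, "jane@example.com", "456 Any Street, Any Town, USA"),
     (3, "Carlos Salazar", 40, "carlos@example.com", "789 Any Street, Any Town, USA")])

# Apply the CDC changes the way operation_indicator does: flag rows, never delete.
conn.execute("UPDATE my_table SET age = 34, operation_indicator = 'U' WHERE id = 1")
conn.execute("UPDATE my_table SET operation_indicator = 'D' WHERE id = 2")  # soft delete
conn.execute("INSERT INTO my_table VALUES (5, 'Mary Major', 35, 'mary@example.com', "
             "'231 Main Street, Anytown, USA', 'I')")

# View that hides soft-deleted rows but keeps NULL-flagged full-load rows.
conn.execute("""CREATE VIEW my_table_active AS
    SELECT * FROM my_table WHERE COALESCE(operation_indicator, '') <> 'D'""")

active_ids = [row[0] for row in conn.execute("SELECT id FROM my_table_active ORDER BY id")]
print(active_ids)  # [1, 3, 5]
```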

Conclusion

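In this post, we demonstrated two ways to filter delete operations from a source database by using built-in AWS DMS features. You can use either method, depending on the data requirements of your target database. Using Amazon S3 as a target adds the cost of intermediate data storage, but it can be useful if multiple consumers need to process different subsets of the data from Amazon S3. Conversely, when you have only a single source-to-target data migration or replication requirement, the operation_indicator function in a transformation rule is a good choice.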

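To get started, review the post "Database Migration - What Do You Need to Know Before You Start?". Also review the recommended best practices for AWS DMS and the other S3 endpoint settings to address a variety of use cases.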

About the authors

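Deepthi Saina is a Data Architect at AWS based in the UK. Deepthi specializes in Amazon RDS, Amazon Aurora, and AWS DMS, and is a subject matter expert for AWS DMS and Amazon RDS. In her role, she helps customers across the EMEA region migrate, modernize, and optimize database solutions on AWS.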

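Vivekananda Mohapatra is a Lead Consultant with the Amazon Web Services ProServe team. He has deep expertise in database development and administration on Amazon RDS for Oracle, Amazon RDS for PostgreSQL, Amazon Aurora PostgreSQL, and Amazon Redshift, and is a subject matter expert in AWS DMS. He works closely with customers every day to help them migrate and modernize their databases and applications on AWS.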
