AWS Nitro Enclaves for running Ethereum validators: Part 2

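Earlier in this series, we introduced the high-level application architecture and briefly explained the secure bootstrapping and secure signing flows.

In this post, we dive deep into three areas: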

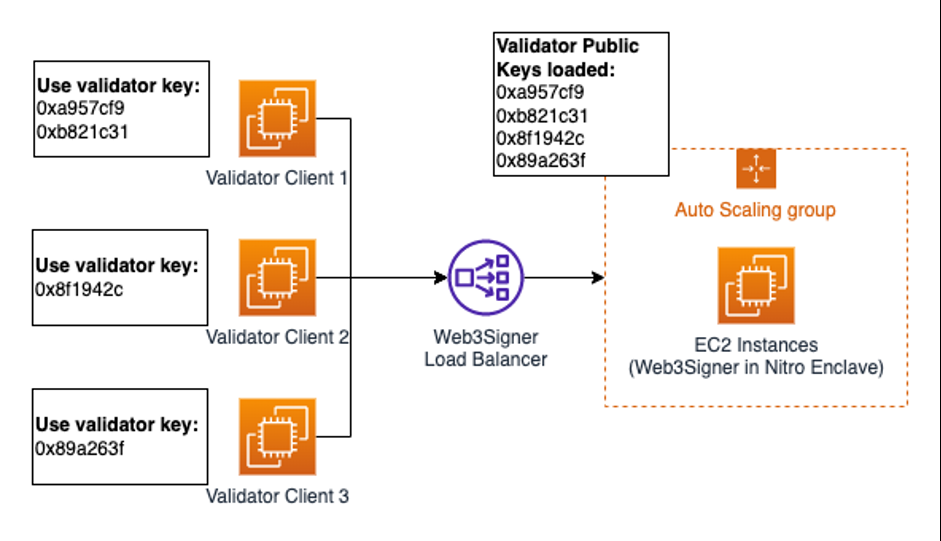

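- Web3Signer integration patterns: how to integrate different Ethereum validator nodes with a single Web3Signer node

- Securely bootstrapping Web3Signer inside a Nitro Enclave: how to securely inject sensitive configuration files such as private keys into a Nitro Enclave, and how these configuration artifacts are used for the HTTPS endpoint

- Exposing the Web3Signer HTTPS API via vsock: how to transparently tunnel Transport Layer Security (TLS) traffic over a vsock connection to establish secure communication with the Web3Signer service running inside the Nitro Enclave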

Integrating with Web3Signer for Ethereum validation

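To integrate with Web3Signer, a validator client needs to support

0xa957cf9 and

31, which means validator client 2 can no longer use these two keys. Instead, validator client 2 uses key 0xb821

c

0x8f1942c, which no validator client uses.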

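In the accompanying

- Generate a certificate for each validator client: use the certificates to create a known clients file and load it into Web3Signer. This approach is operationally complex, but revocation is possible because only the known clients file in Web3Signer needs to be modified.

- Configure Web3Signer to allow validator clients that hold a certificate from a trusted CA to connect: this approach is operationally easier, but it requires maintaining a private certificate authority (CA) server to issue a certificate for each validator client. At the time of writing, revocation is still under development.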
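As a rough illustration of the first option, the following sketch derives a SHA-256 certificate fingerprint and formats one known clients entry. It assumes the file lists one client per line as a common name followed by the colon-separated fingerprint. The helper names and the sample common name are hypothetical, so confirm the exact file format in the Web3Signer documentation before relying on it.

```python
import hashlib
import ssl


def sha256_fingerprint(der_cert: bytes) -> str:
    """Return the SHA-256 digest of DER bytes as colon-separated uppercase hex."""
    digest = hashlib.sha256(der_cert).hexdigest().upper()
    return ":".join(digest[i:i + 2] for i in range(0, len(digest), 2))


def known_client_entry(common_name: str, pem_cert: str) -> str:
    """Build one known clients line (assumed format: '<common_name> <fingerprint>')."""
    # The fingerprint is computed over the DER encoding, not the PEM text.
    der_cert = ssl.PEM_cert_to_DER_cert(pem_cert)
    return f"{common_name} {sha256_fingerprint(der_cert)}"
```

Revoking a client under this approach then amounts to removing its line from the file.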

For more information about client authentication, see

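Securely bootstrapping Web3Signer inside a Nitro Enclave

To securely run the Web3Signer service inside a Nitro Enclave, the following two configuration artifacts are required:

- A TLS key pair used to secure the Web3Signer HTTPS API

- Keystore files as specified by EIP-2335, containing the encrypted BLS12-381 validator private keys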

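Both configuration artifacts need to be encrypted with a symmetric KMS key.

The KMS key used to encrypt the configuration artifacts needs a key policy that authorizes kms:Decrypt operations initiated from inside the enclave using cryptographic attestation. For more details on how to configure the KMS key policy for this solution, see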
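To make the attestation requirement more concrete, here is a minimal sketch of a key policy statement that allows kms:Decrypt only when the request carries an attestation document with a matching enclave image measurement. It uses the `kms:RecipientAttestation:ImageSha384` condition key. The function name, the statement ID, the role ARN, and the measurement value are placeholders, not values from this solution.

```python
import json


def attested_decrypt_statement(principal_arn: str, image_sha384: str) -> dict:
    """Allow kms:Decrypt only for requests attested by the expected enclave image."""
    return {
        "Sid": "AllowDecryptFromAttestedEnclave",  # hypothetical statement ID
        "Effect": "Allow",
        "Principal": {"AWS": principal_arn},
        "Action": "kms:Decrypt",
        "Resource": "*",
        "Condition": {
            "StringEqualsIgnoreCase": {
                # Measurement of the enclave image file (the same value as PCR0).
                "kms:RecipientAttestation:ImageSha384": image_sha384
            }
        },
    }


if __name__ == "__main__":
    # Placeholder principal and measurement, for illustration only.
    statement = attested_decrypt_statement(
        "arn:aws:iam::111122223333:role/ExampleParentInstanceRole", "0" * 96
    )
    print(json.dumps({"Version": "2012-10-17", "Statement": [statement]}, indent=2))
```

With a condition like this, a decrypt call made with the same role from the parent instance itself, without an attestation document, would not match the statement.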

In this solution, we use two DynamoDB tables (tlsKeyTable and validatorKeyTable).

tlsKeyTable uses the following schema:

validatorKeyTable enforces the following schema:

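As we pointed out earlier, before the bootstrapping process can start, the TLS key and the EIP-2335 keystore files need to be encrypted with a symmetric KMS key, and the resulting ciphertext needs to be stored in the DynamoDB tables.

With the schema definitions aligned in this way, different secure Web3Signer processes can be started, each with a unique TLS key and its own set (>=1) of validator keys, simply by passing a TLS key_id and an array of Web3Signer_UUID keys as parameters to the bootstrapping process.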
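The lookup that these two parameters drive could be sketched as follows. This is an illustration under assumptions, not the solution's code: the partition key names `key_id` and `web3signer_uuid` and the ciphertext attribute `encrypted_key` are hypothetical, and the table arguments are any objects exposing a boto3-style `get_item(Key=...)` method, such as `boto3.resource("dynamodb").Table(name)`.

```python
from typing import Any, Dict, List


def load_encrypted_config(tls_table: Any, validator_table: Any,
                          tls_key_id: str, validator_uuids: List[str]) -> Dict[str, Any]:
    """Fetch one encrypted TLS key and one or more encrypted validator keys."""
    if not validator_uuids:
        raise ValueError("at least one validator key is required")

    # Assumed attribute names; adapt them to the actual table schemas.
    tls_item = tls_table.get_item(Key={"key_id": tls_key_id}).get("Item")
    if tls_item is None:
        raise KeyError(f"TLS key not found: {tls_key_id}")

    validator_keys = []
    for uuid in validator_uuids:
        item = validator_table.get_item(Key={"web3signer_uuid": uuid}).get("Item")
        if item is None:
            raise KeyError(f"validator key not found: {uuid}")
        validator_keys.append(item["encrypted_key"])

    # Everything returned here is still ciphertext; decryption happens in the enclave.
    return {
        "encrypted_tls_key": tls_item["encrypted_key"],
        "encrypted_validator_keys": validator_keys,
    }
```

Because the tables only ever hold ciphertext, the parent instance that performs this lookup never sees the plaintext keys.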

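The following diagram depicts the bootstrapping flow.

The flow consists of eight steps:

- The nitro-signing-server systemd service starts the watchdog.py process. The watchdog process reads the encrypted Web3Signer configuration assets (TLS key, validator keys) from the DynamoDB tables.

- The watchdog starts the Web3Signer enclave. Once the enclave is up and running, the watchdog calls the enclave's init operation by sending a payload. In it, encrypted_tls_key and encrypted_validator_keys contain the configuration items downloaded from the DynamoDB tables, and the credential represents the temporary AWS security credentials gathered by the watchdog.

- The enclave_init process running inside the Web3Signer enclave validates the incoming init request to ensure that all required parameters are included. If all required parameters are present, kmstool-enclave-cli is used to decrypt the TLS and validator keys passed to the Web3Signer enclave.

- kmstool-enclave-cli establishes a secure outbound connection to AWS KMS via vsock-proxy and uses cryptographic attestation to decrypt the configuration artifacts.

- If the kms:Decrypt operation using cryptographic attestation succeeds, the decrypted files are converted into the correct file format and written to the enclave's in-memory file system. The enclave_init process then starts the Web3Signer process.

- The Web3Signer process reads the configuration files, decrypts the encrypted private keys in the keystore files, and starts the HTTPS listener on the enclave's localhost interface.

- Validator clients must configure Web3Signer as their remote signing solution, for example Lighthouse remote signing, as described earlier in this post.

- Once configured correctly, validator clients can connect to the Web3Signer HTTPS endpoint exposed via https_proxy. For more details on how to tunnel HTTPS traffic over vsock connections, see the next section of this post.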
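The request validation described for enclave_init can be pictured with a short sketch. It assumes the init payload is a JSON object. The field names encrypted_tls_key and encrypted_validator_keys come from the text above, while `credential` as a field name and the function name are assumptions made for illustration.

```python
import json

# "credential" is an assumed field name for the temporary AWS security credentials.
REQUIRED_FIELDS = ("encrypted_tls_key", "encrypted_validator_keys", "credential")


def parse_init_request(raw: bytes) -> dict:
    """Decode an init request and reject it unless every required field is present."""
    request = json.loads(raw)
    if not isinstance(request, dict):
        raise ValueError("init request must be a JSON object")

    # Empty values are treated the same as missing ones.
    missing = [name for name in REQUIRED_FIELDS if not request.get(name)]
    if missing:
        raise ValueError("missing required parameters: " + ", ".join(missing))
    return request
```

Rejecting incomplete requests before any decryption is attempted keeps the enclave from starting Web3Signer with a partial configuration.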

Due to the ephemeral nature of a Nitro Enclave's in-memory file system, every time the enclave or the parent

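Exposing the Web3Signer HTTPS API via vsock

Vsock is a local communication channel between the EC2 parent instance and its enclave. It is the only communication channel that the enclave can use to interact with external services.

To establish a secure connection, for example using TLS, from inside the parent instance or from an AWS Lambda function running in the same Amazon VPC as the EC2 parent instance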

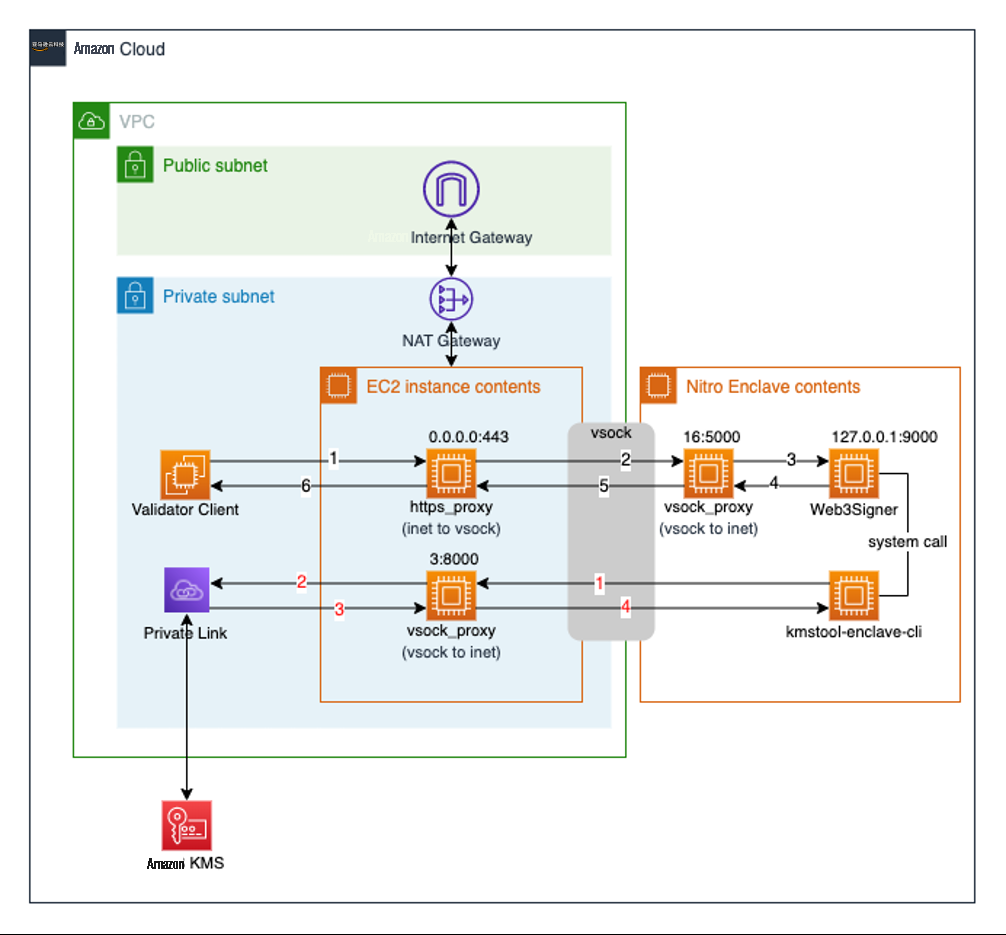

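The following two sections explain in detail how inbound and outbound HTTPS (TLS) connections are established using vsock proxy processes. The numbers correlate with the different states in the following diagram.

The diagram also depicts the listener and port configuration of all relevant components as provisioned during the AWS CDK deployment.

A notation like 16:5000 refers to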
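Vsock endpoints are addressed by a context ID (CID) and a port rather than an IP address and a port. Assuming the notation above follows that CID:port form, a small helper (hypothetical, not part of the solution) can split it into the tuple that socket APIs expect:

```python
from typing import NamedTuple


class VsockAddress(NamedTuple):
    cid: int   # context ID that identifies the parent instance or an enclave
    port: int  # vsock port number


def parse_vsock_address(text: str) -> VsockAddress:
    """Parse a 'CID:port' string into a VsockAddress."""
    cid, port = text.split(":")
    return VsockAddress(int(cid), int(port))


if __name__ == "__main__":
    # On Nitro Enclaves, CID 3 always identifies the parent instance.
    print(parse_vsock_address("16:5000"))
    print(parse_vsock_address("3:8000"))
```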

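HTTPS inbound flow

The HTTPS inbound flow consists of the following steps:

- In the given solution, https_proxy acts as a TCP proxy that translates between AF_INET and AF_VSOCK.

- https_proxy establishes a vsock connection to a separate vsock_proxy process that runs inside the enclave and listens on 16:5000. It then forwards all received TCP packets over this connection.

- For every incoming vsock connection, vsock_proxy establishes an AF_INET TCP connection to the Web3Signer HTTPS API, which listens on 127.0.0.1:9000.

- Web3Signer responds over the established TCP connection.

- After receiving the Web3Signer response, vsock_proxy forwards the TCP packets over the established vsock connection to https_proxy running on the parent instance.

- https_proxy closes the vsock connection and forwards the TCP packets back to the requesting client.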
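The AF_INET to AF_VSOCK translation in these steps can be sketched with Python's standard socket module. This is a minimal illustration rather than the https_proxy used in the solution: the function names are hypothetical, error handling is kept to a minimum, and AF_VSOCK is only available on Linux. The proxy never inspects the bytes it relays, so the TLS session stays end to end between the client and Web3Signer.

```python
import socket
import threading


def relay(src: socket.socket, dst: socket.socket, bufsize: int = 4096) -> None:
    """Copy bytes from src to dst until src reaches EOF, then half-close dst."""
    while True:
        data = src.recv(bufsize)
        if not data:
            break
        dst.sendall(data)
    try:
        dst.shutdown(socket.SHUT_WR)
    except OSError:
        pass  # the peer has already gone away


def bridge(a: socket.socket, b: socket.socket) -> None:
    """Relay traffic in both directions, then close both sockets."""
    worker = threading.Thread(target=relay, args=(a, b), daemon=True)
    worker.start()
    relay(b, a)
    worker.join()
    a.close()
    b.close()


def serve(listen_port: int, enclave_cid: int, enclave_port: int) -> None:
    """Accept TCP clients and bridge each one to a vsock listener (Linux only)."""
    with socket.create_server(("0.0.0.0", listen_port)) as server:
        while True:
            client, _ = server.accept()
            upstream = socket.socket(socket.AF_VSOCK, socket.SOCK_STREAM)
            upstream.connect((enclave_cid, enclave_port))
            threading.Thread(target=bridge, args=(client, upstream), daemon=True).start()
```

A call such as `serve(443, 16, 5000)` would mirror the CID and port shown above; the TCP port 443 is an assumption. The component inside the enclave does the reverse: it accepts on AF_VSOCK and connects to 127.0.0.1:9000.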

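HTTPS outbound flow

Outbound HTTPS connections that originate from the kmstool-enclave-cli binary and target AWS KMS use a mechanism similar to the one explained earlier. The steps are as follows:

- kmstool-enclave-cli establishes a vsock connection to the vsock_proxy process that runs on the EC2 parent instance and listens on 3:8000.

- vsock_proxy establishes a TCP connection to AWS KMS.

- AWS KMS responds to vsock_proxy over the established TCP connection.

- vsock_proxy forwards the AWS KMS response to the enclave over the open vsock connection. kmstool-enclave-cli then closes the vsock connection.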

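Using this mechanism, HTTPS requests can be securely proxied into and out of the enclave.

It is important to point out that the X509 certificate used to authenticate the Web3Signer endpoint needs to have the hostname of the parent EC2 instance set as its CN. Otherwise, hostname verification fails.

In addition, using this HTTPS tunneling mechanism and having Web3Signer terminate the TLS session inside the Nitro Enclave can increase CPU load because of the cryptographic operations involved.

Besides the need to protect sensitive configuration payloads inside the Nitro Enclave, such as the validator private keys that Web3Signer requires, exposing the Web3Signer HTTPS API introduces a new potential attack vector. Because the HTTPS endpoint is exposed to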

You can use different tools and open-source libraries to tunnel TLS connections over vsock:

- traffic-forwarder.py: a script used in the AWS Nitro Enclaves workshop

- viproxy: an open-source Go library on GitHub

- socat: a versatile Linux networking utility

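Conclusion

In this post, we explained in depth how to integrate Web3Signer with validator clients. We also dove deep into the secure bootstrapping process, and finally explained in detail how to expose the Web3Signer HTTPS API via vsock.

You can customize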

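About the authors

David-Paul Dornseifer is a Blockchain Architect in the AWS Worldwide Specialist Solutions Architect organization. He focuses on helping customers design, deploy, and scale end-to-end blockchain solutions.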

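Aldred Halim is a Solutions Architect in the AWS Worldwide Specialist Solutions Architect organization. He works closely with customers to design architectures and build components that ensure blockchain workloads run successfully on AWS.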

